Modern businesses face a critical challenge: artificial intelligence solutions that promise transformation but deliver fragmentation. Models are trained in isolation, data pipelines break at integration points, and systems cannot adapt when operational reality shifts. The answer lies in end-to-end AI, a holistic approach that connects every stage of the AI lifecycle into a single, coherent flow. Instead of building sophisticated models that fail when they meet real-world operations, end-to-end AI ensures that data collection, model training, deployment, monitoring, and continuous improvement work as one unified system. This approach transforms AI from a collection of experiments into a reliable operational asset.

Understanding End to End AI Architecture

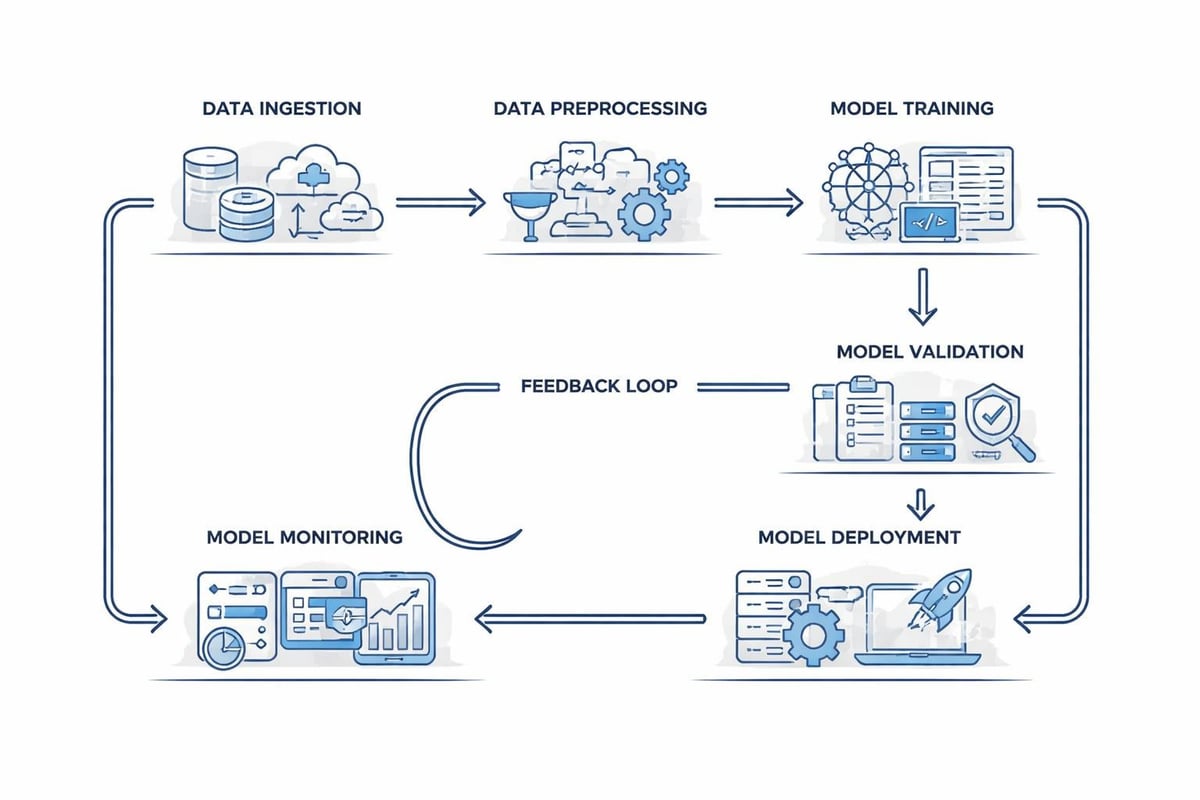

End-to-end AI represents a fundamental shift in how organizations build and deploy intelligent systems. Rather than treating machine learning as a discrete project with a beginning and end, this methodology creates continuous loops where models learn from operational feedback and improve over time.

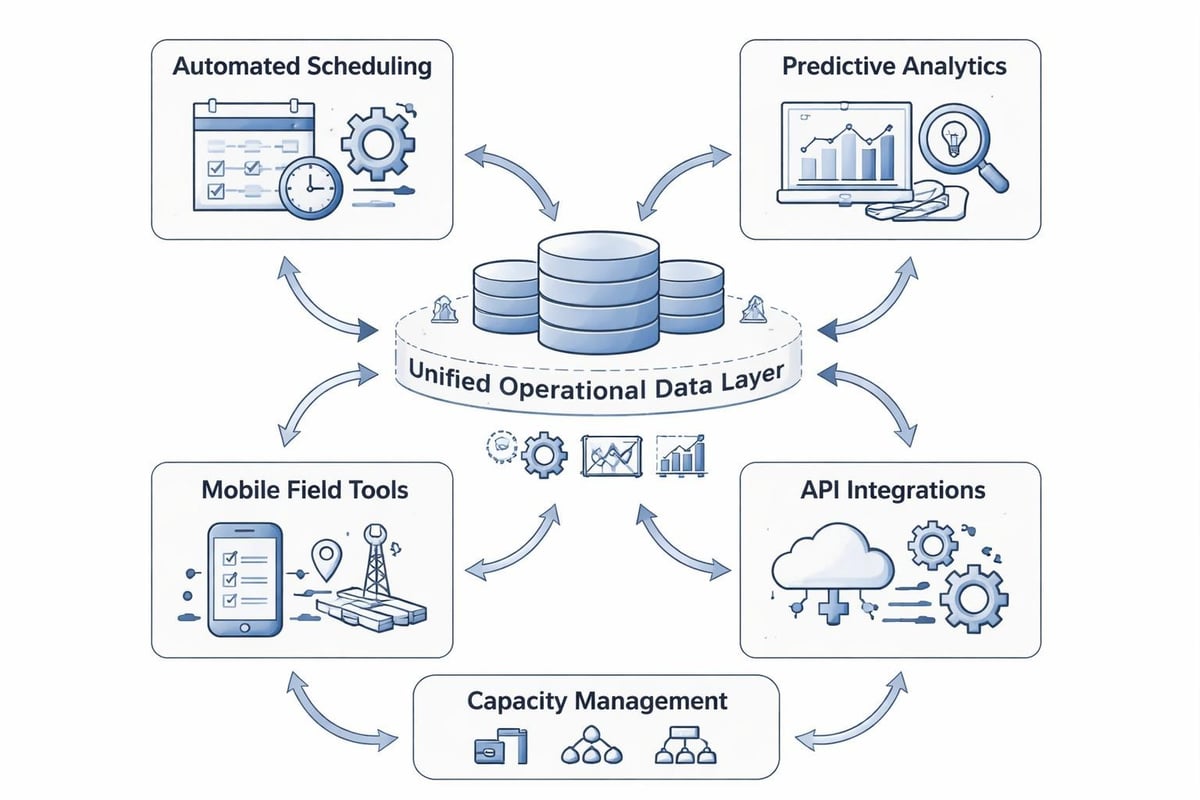

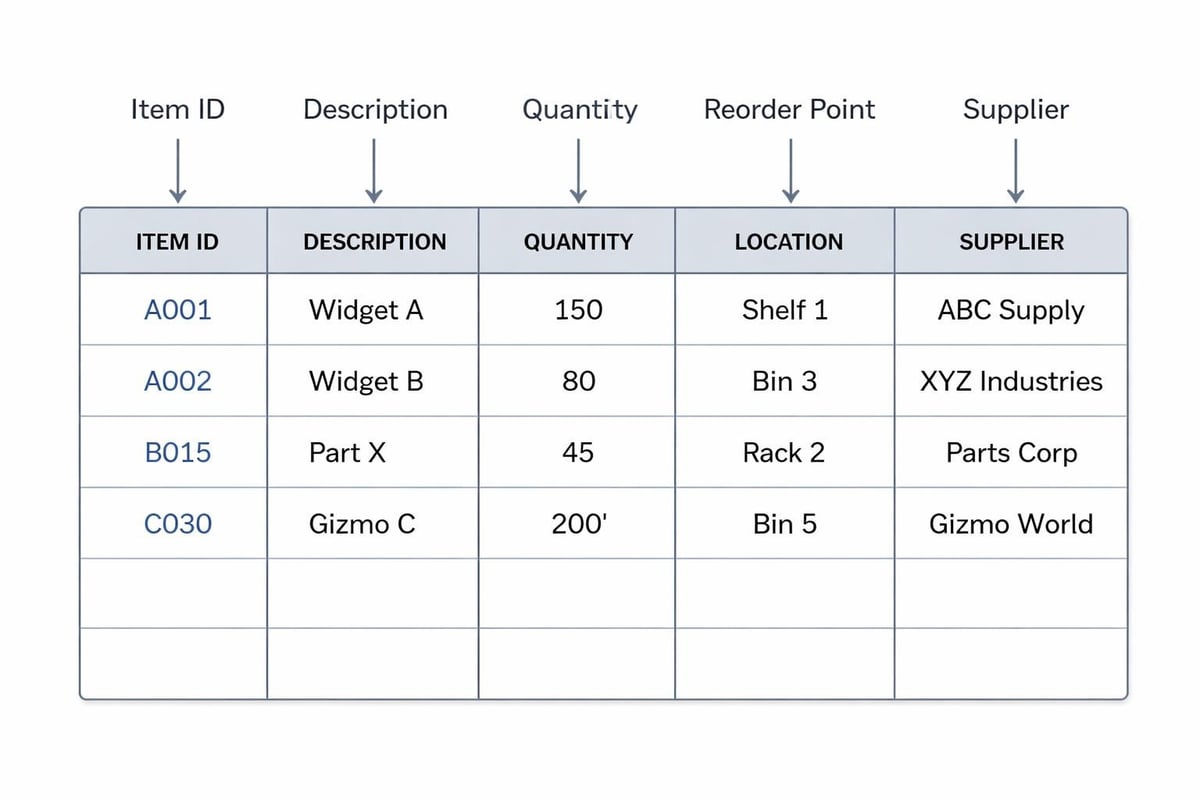

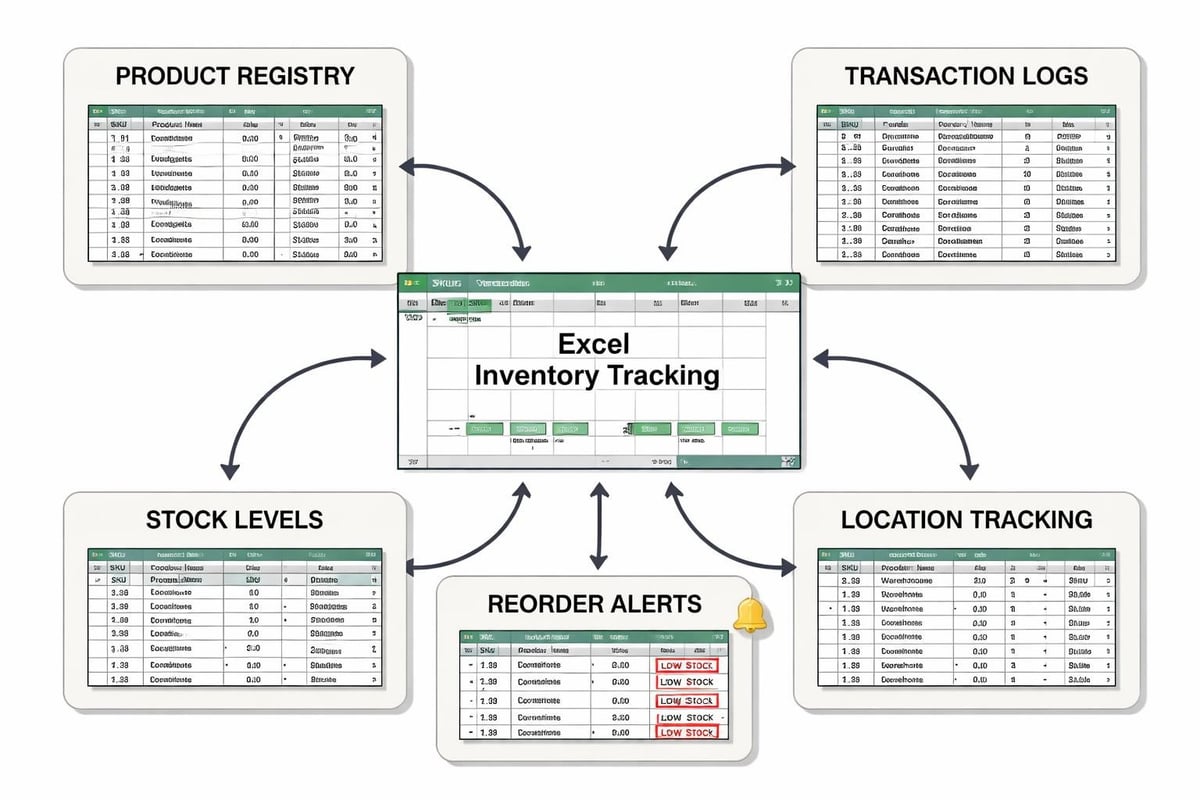

The architecture starts with data infrastructure. Raw inputs from sensors, customer interactions, field operations, and business transactions flow into centralized repositories. Data quality matters more than volume: inconsistent formats, missing timestamps, or siloed sources create blind spots that compromise every downstream process.
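The data-quality problems named above can be caught at ingestion. The following is a minimal sketch, assuming records arrive as dictionaries with `source`, `timestamp`, and `value` fields; those field names, `validate_record`, and `split_by_quality` are illustrative and not taken from any particular platform.

```python
from datetime import datetime

# Hypothetical required fields for one operational event record.
REQUIRED_FIELDS = ("source", "timestamp", "value")


def validate_record(record: dict) -> list[str]:
    """Return a list of data-quality problems found in one raw record."""
    problems = []
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")
    ts = record.get("timestamp")
    if ts:
        try:
            datetime.fromisoformat(ts)
        except (TypeError, ValueError):
            problems.append("timestamp is not ISO 8601")
    return problems


def split_by_quality(records: list[dict]):
    """Separate clean records from rejected ones, keeping the reasons."""
    clean, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected


records = [
    {"source": "sensor-7", "timestamp": "2024-03-01T08:15:00", "value": 21.4},
    {"source": "sensor-7", "timestamp": "", "value": 21.9},
    {"source": "crm", "timestamp": "01/03/2024 08:20", "value": 3},
]
clean, rejected = split_by_quality(records)
```

Keeping the rejection reasons alongside the rejected records makes the blind spots visible instead of silently dropping data.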

Core Components of Integrated AI Systems

A complete end-to-end AI implementation requires several interconnected layers working in harmony:

- Data ingestion and normalization across multiple sources and formats

- Feature engineering pipelines that transform raw data into model-ready inputs

- Training infrastructure with version control and experiment tracking

- Validation frameworks that test models against real operational scenarios

- Deployment orchestration connecting models to live business processes

- Monitoring systems that detect drift, performance degradation, and edge cases

- Feedback mechanisms that route predictions, outcomes, and exceptions back into training

Research from IBM demonstrates how end-to-end AI workflows can be implemented using modern orchestration tools, highlighting the importance of infrastructure as code and MLOps practices. This integration reduces handoff friction and keeps models synchronized with operational needs.

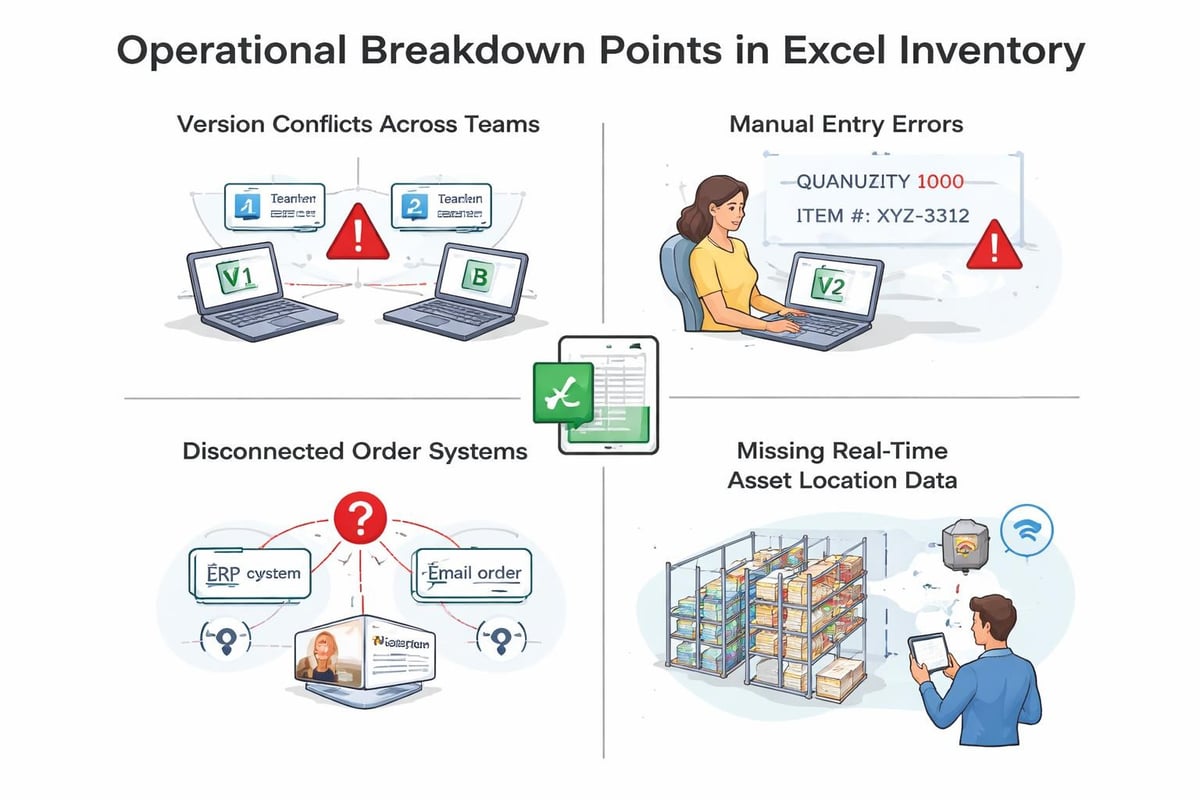

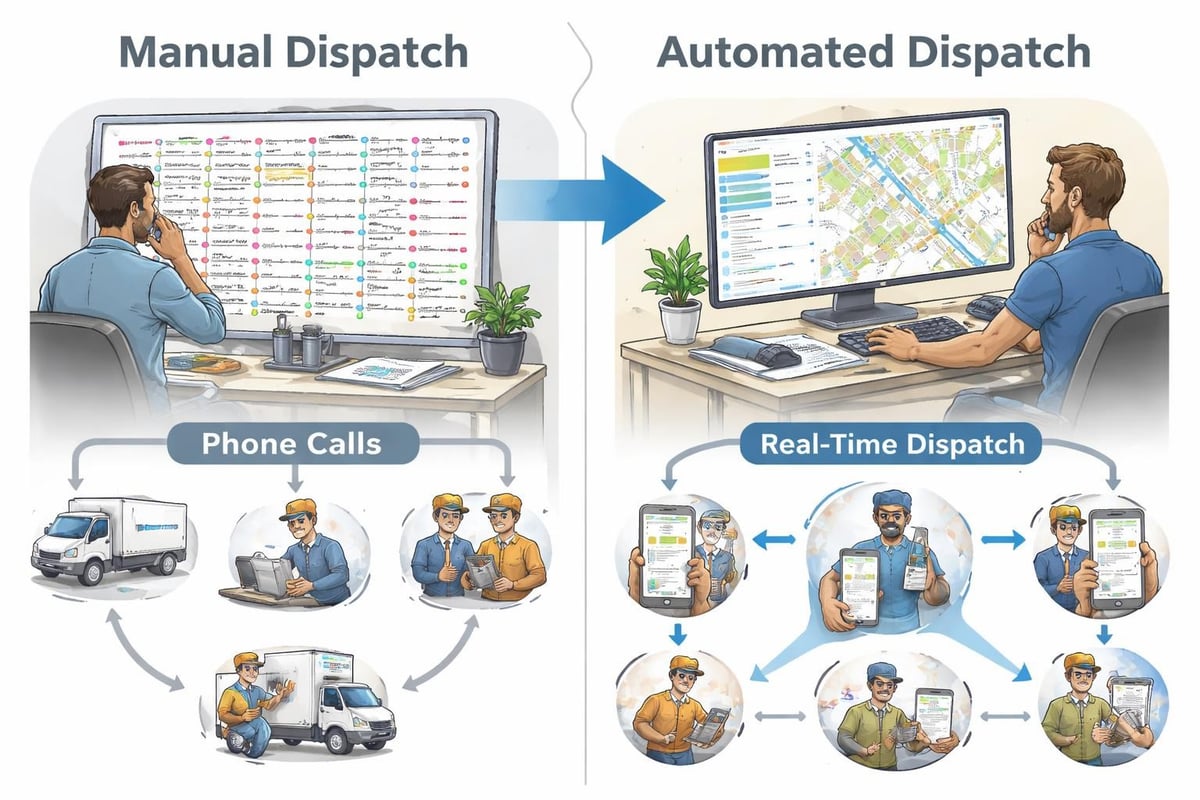

Traditional approaches break at the boundaries between these stages. Data scientists build models in notebooks, engineers struggle to productionize them, operations teams receive predictions they cannot act on, and no one captures whether the AI actually improved business outcomes. End-to-end AI eliminates these gaps by designing for the complete lifecycle from day one.

Business Operations and AI Integration

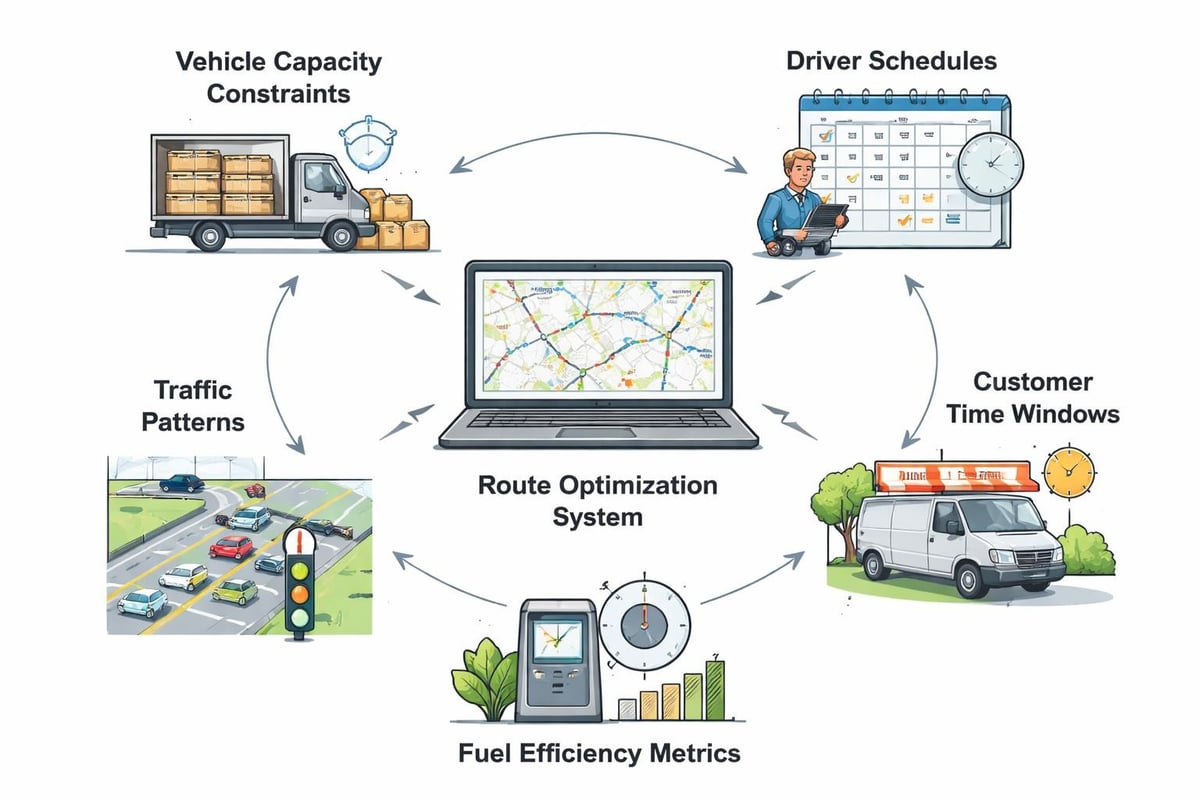

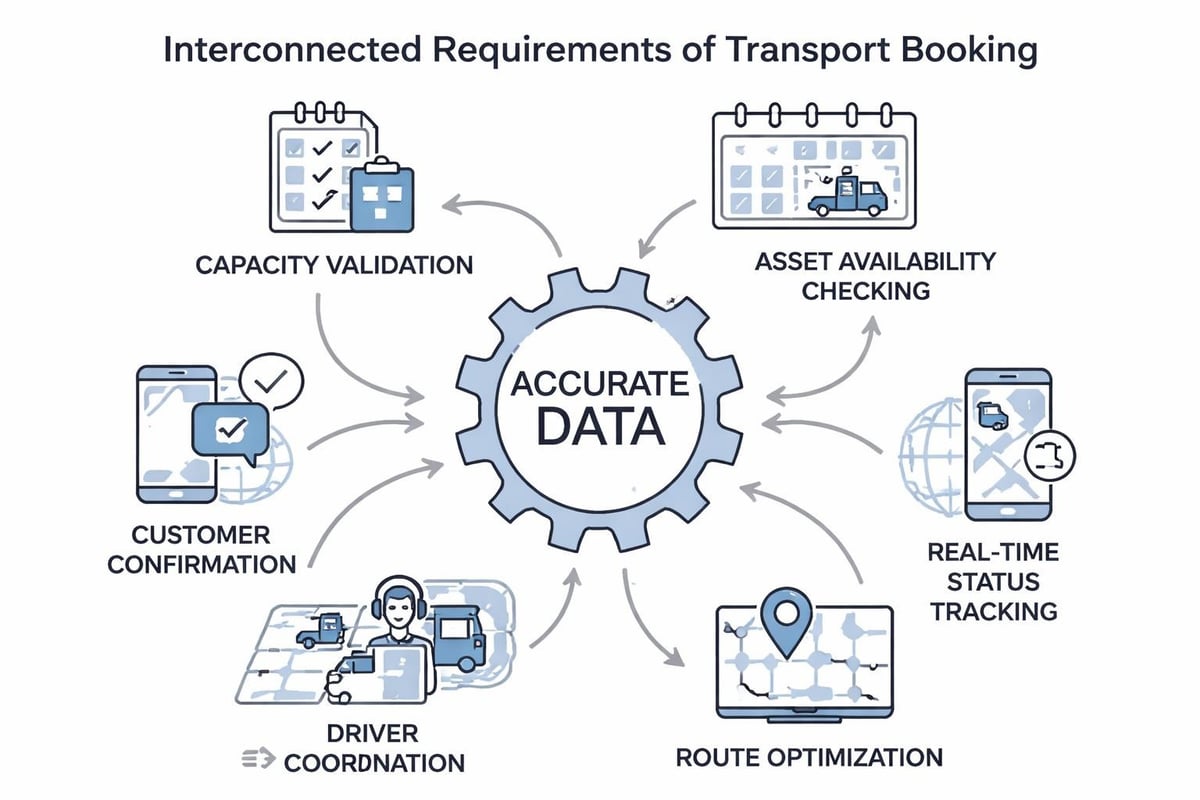

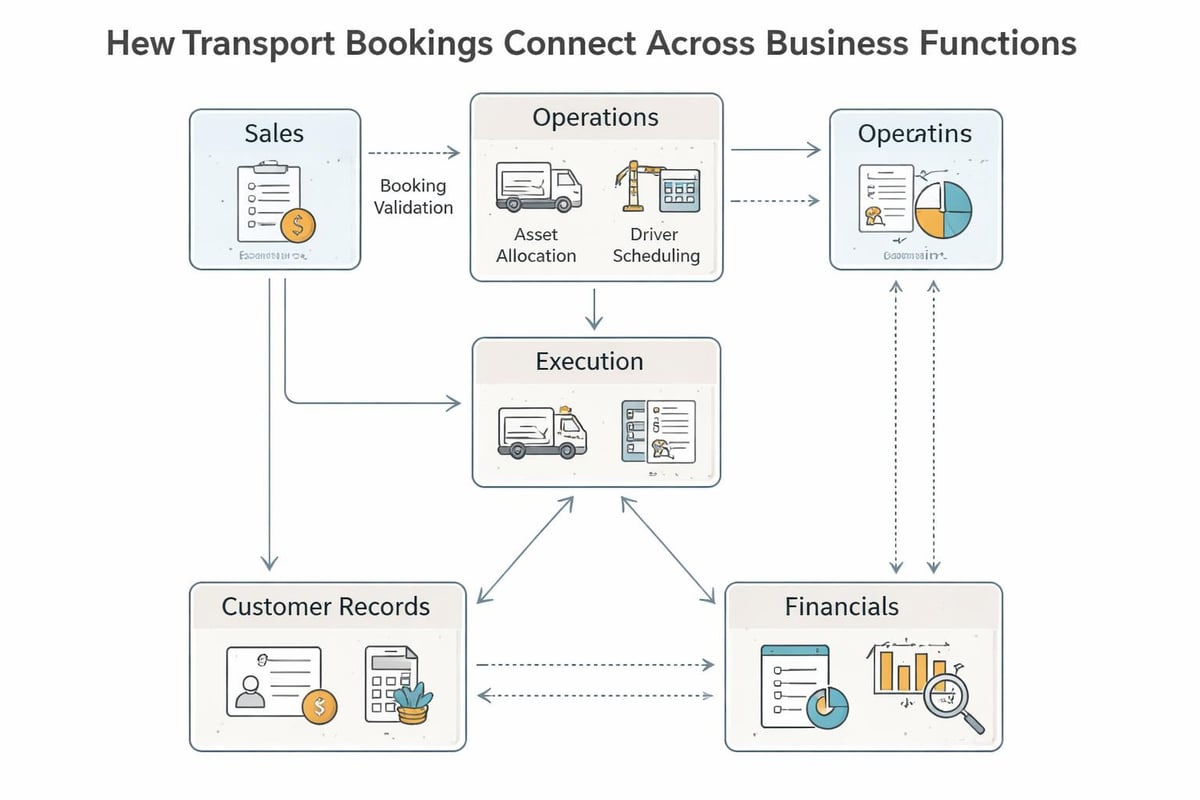

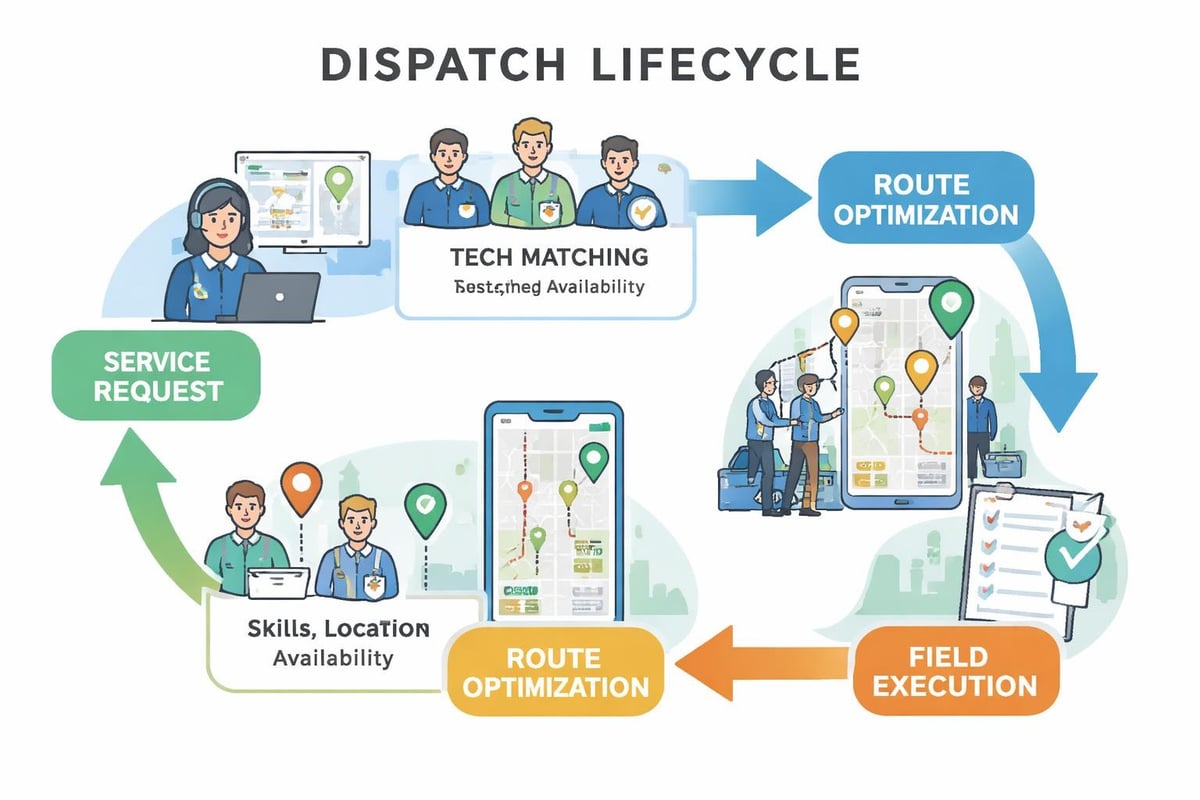

The promise of AI in operations fails when predictions cannot trigger actions. A demand forecast means nothing if it does not adjust procurement schedules. Route optimization provides no value if drivers never receive updated instructions. Customer churn predictions waste resources unless they activate retention workflows.

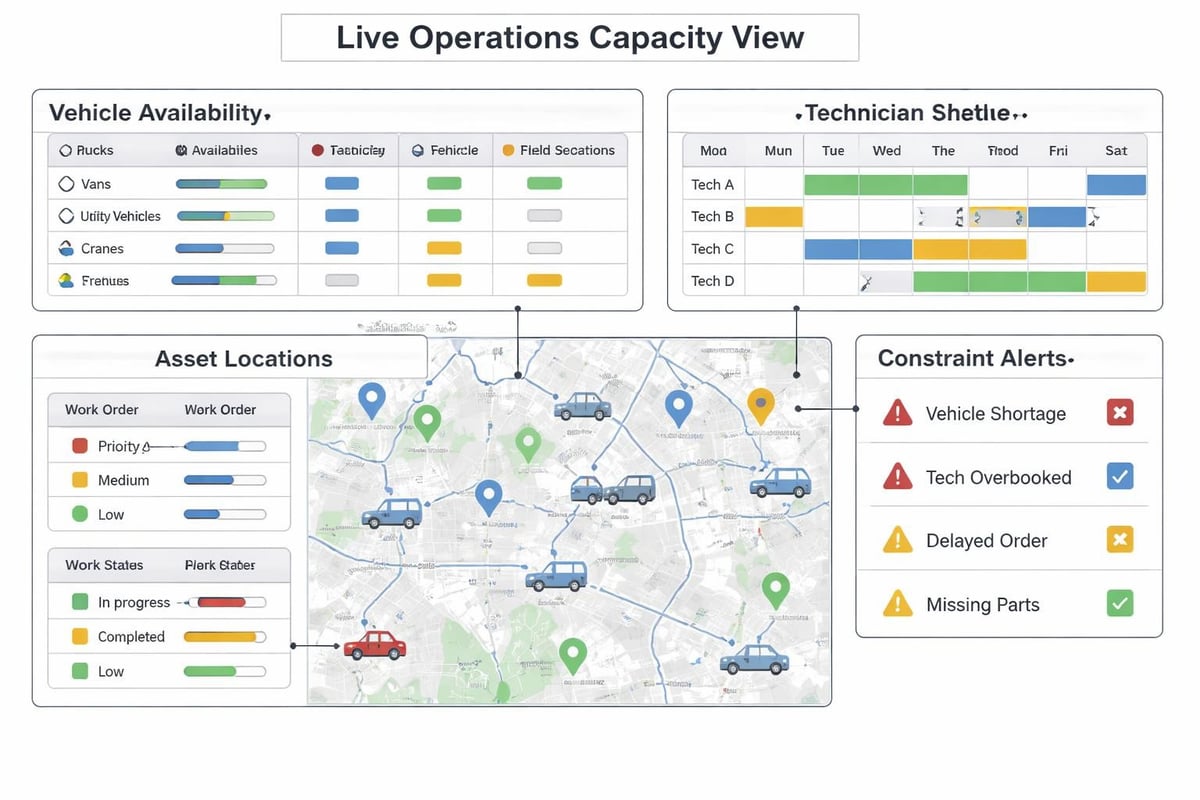

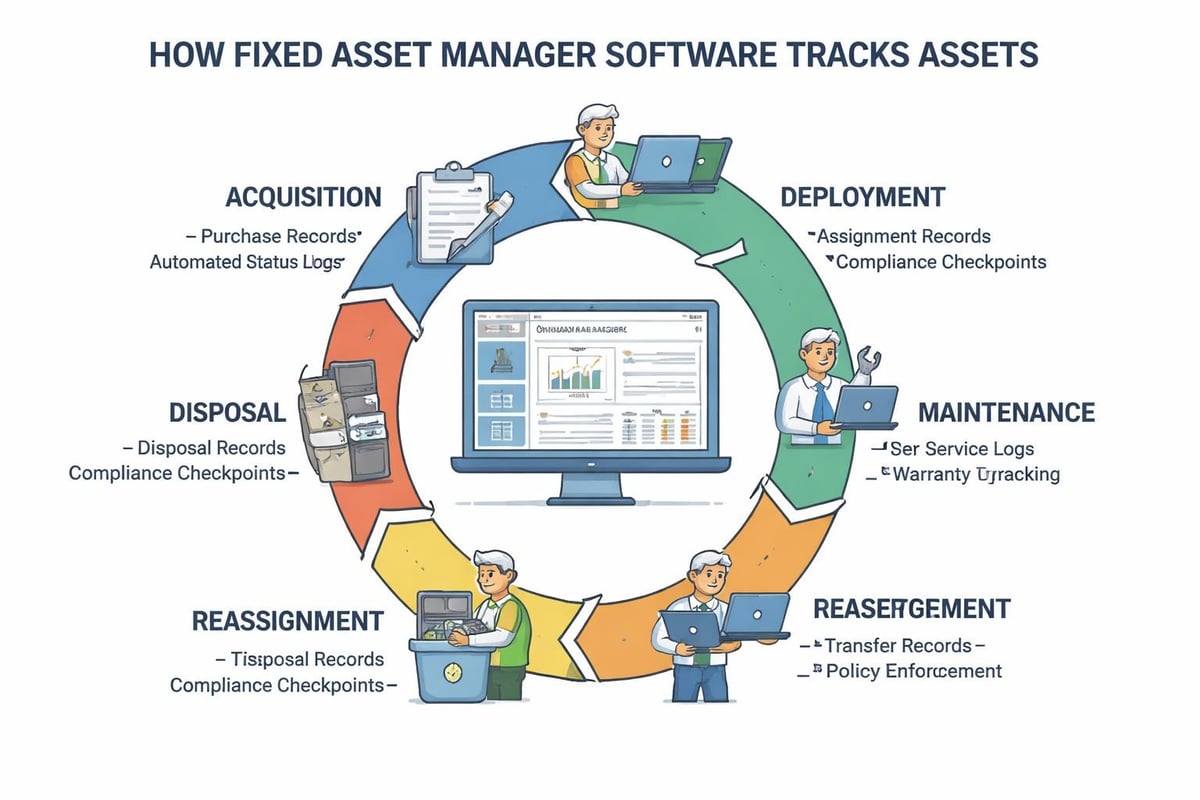

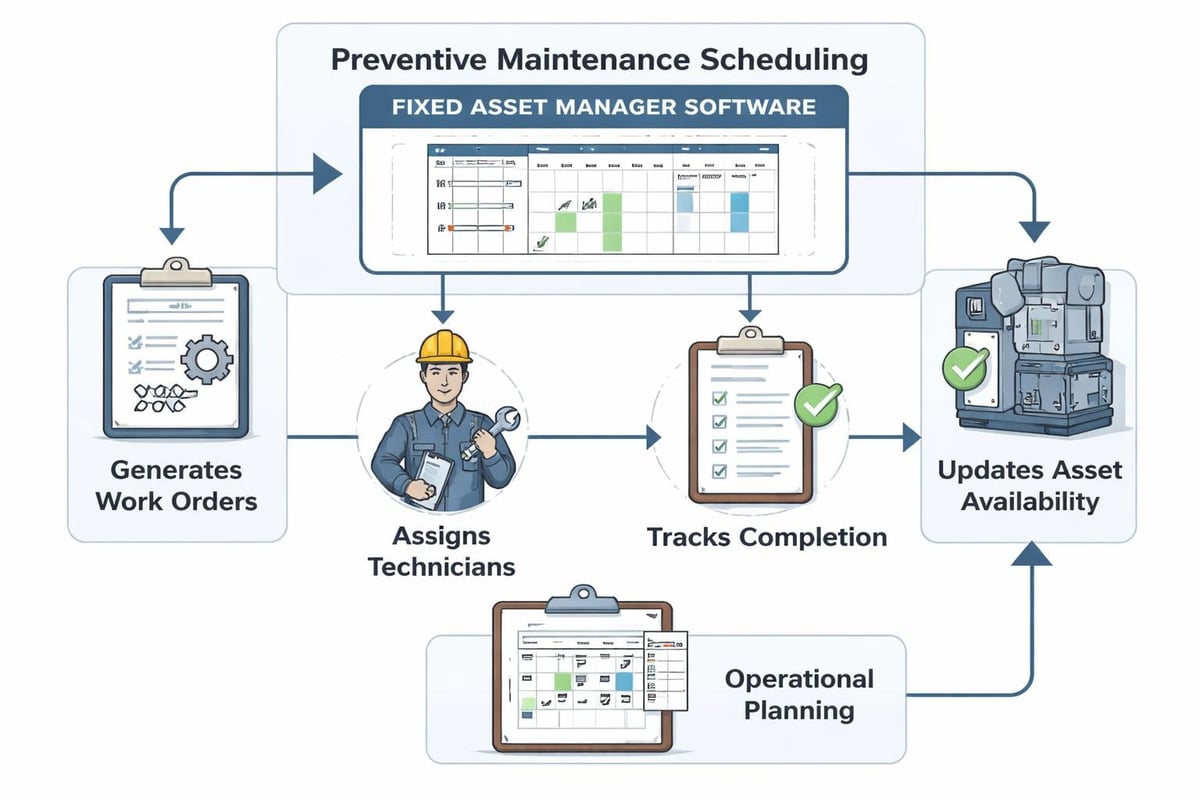

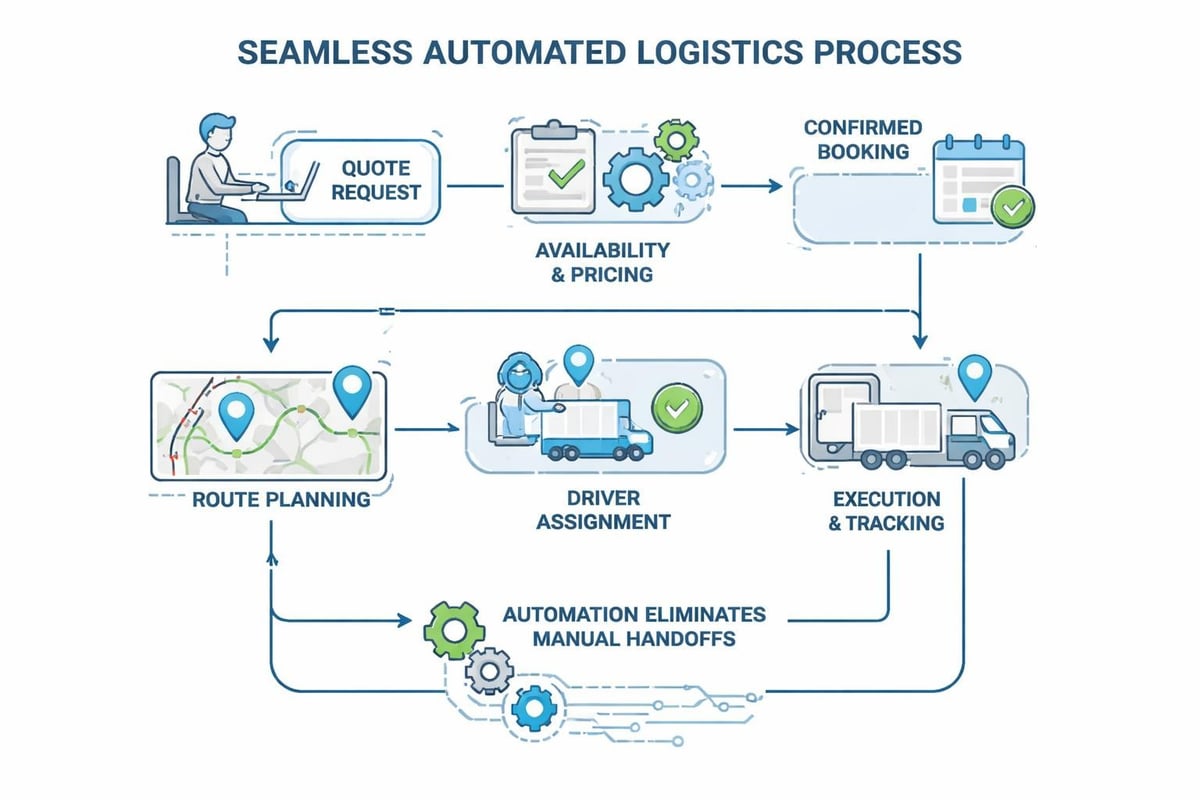

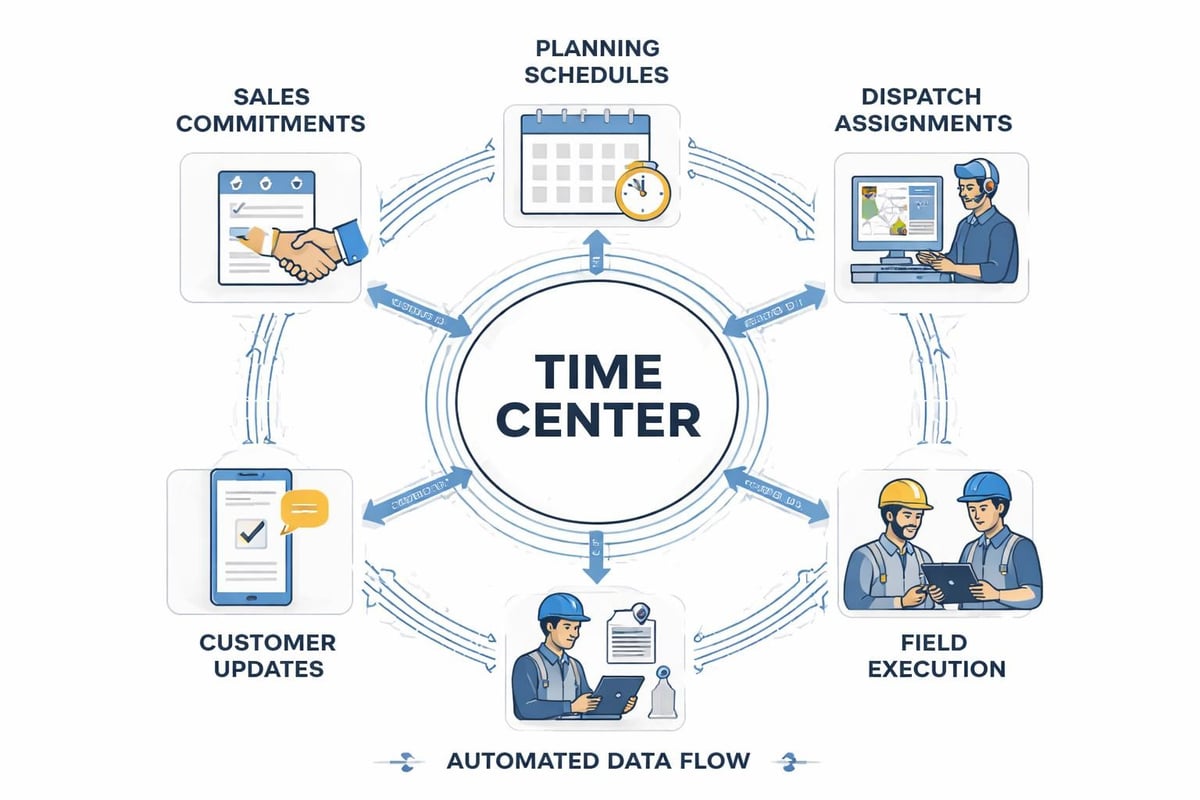

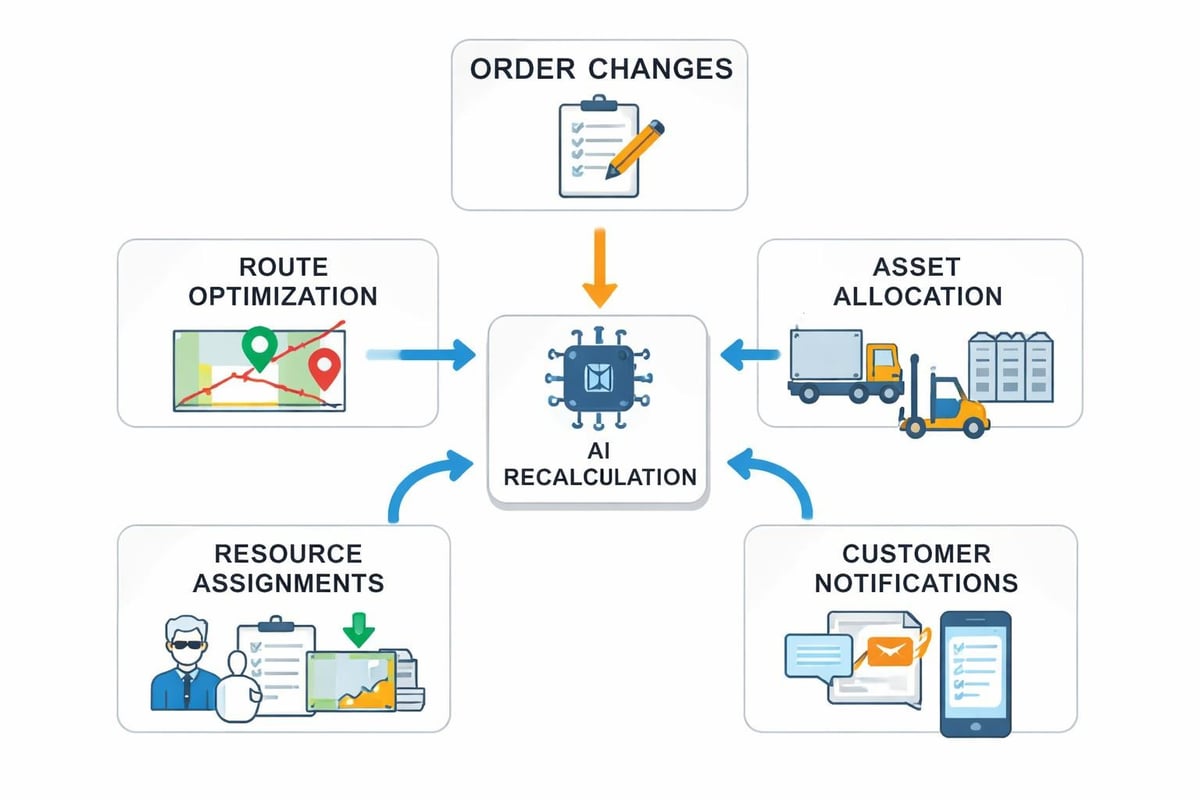

End-to-end AI in operational contexts means models connect directly to the systems that execute work. When a model predicts equipment failure, it automatically generates a maintenance work order, checks technician availability, orders replacement parts, and updates customer ETAs. The prediction becomes the trigger, not just information.
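One way to picture a prediction acting as a trigger is a handler that turns a failure probability into a work order. This is only a sketch under stated assumptions: `WorkOrder`, `handle_failure_prediction`, and the 0.8 threshold are hypothetical, and a real system would call its maintenance and parts systems where this code builds a plain object.

```python
from dataclasses import dataclass, field
from typing import Optional

FAILURE_THRESHOLD = 0.8  # hypothetical cut-off; would be tuned per asset class


@dataclass
class WorkOrder:
    asset_id: str
    technician: Optional[str]
    parts: list = field(default_factory=list)
    status: str = "scheduled"


def handle_failure_prediction(asset_id, failure_probability, technicians, parts_needed):
    """Turn a failure prediction into a work order, or do nothing below threshold."""
    if failure_probability < FAILURE_THRESHOLD:
        return None
    # Pick the first technician marked available; None means a planner must step in.
    available = [name for name, free in technicians.items() if free]
    technician = available[0] if available else None
    status = "scheduled" if technician else "needs_planner"
    return WorkOrder(asset_id, technician, list(parts_needed), status)
```

The `needs_planner` status keeps a human in the loop when the automated path cannot complete, rather than dropping the prediction.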

| Integration Point | Traditional Approach | End to End AI Approach |
|---|---|---|
| Demand forecasting | Weekly report emailed to planners | Automatic purchase orders and capacity allocation |
| Route optimization | Planner reviews suggestions manually | Routes sync to driver mobile apps in real-time |
| Quality prediction | Alerts sent to supervisor inbox | Inspection workflow triggered with priority scoring |
| Inventory optimization | Monthly stock review meetings | Dynamic reorder points adjusted continuously |

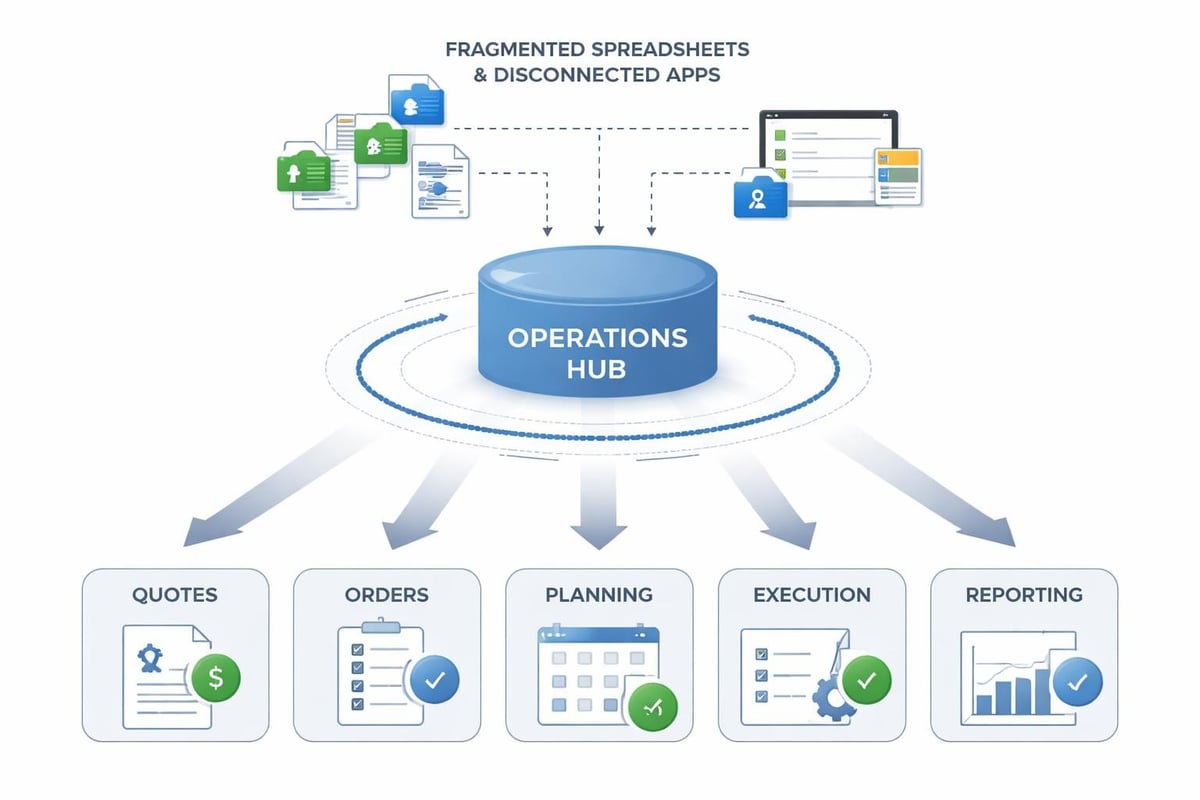

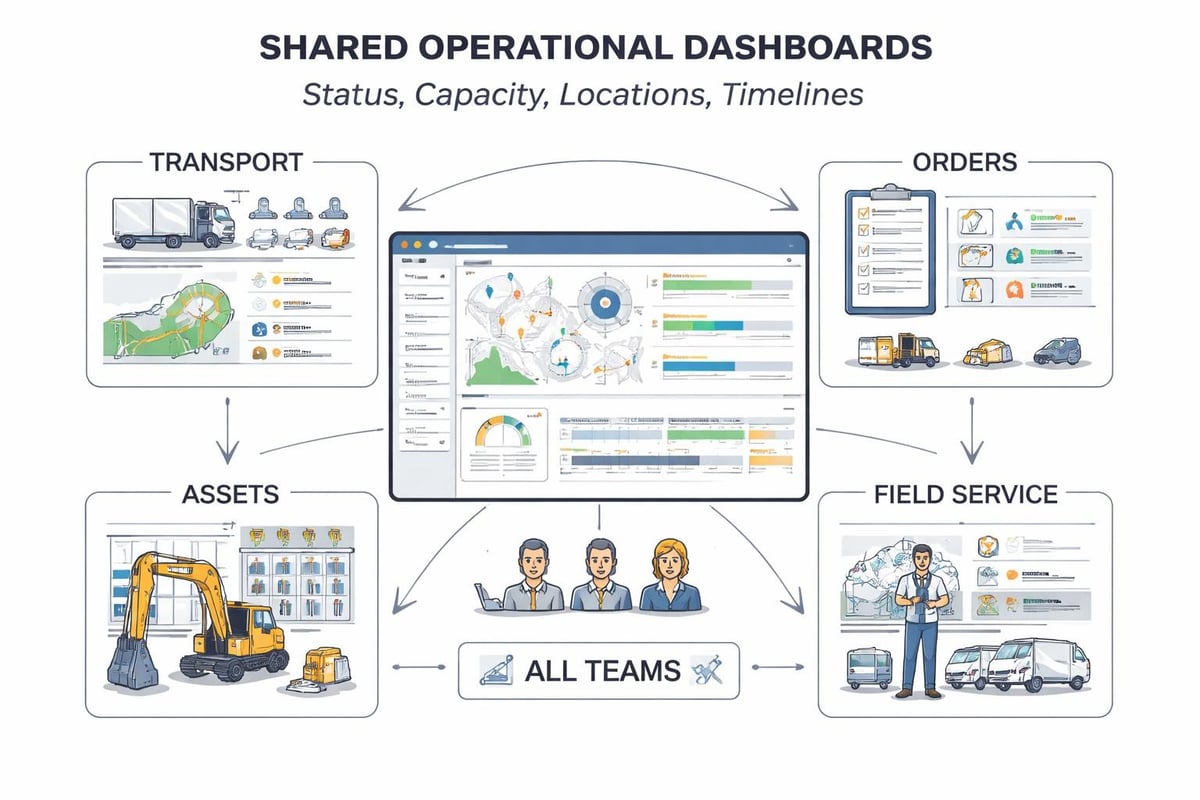

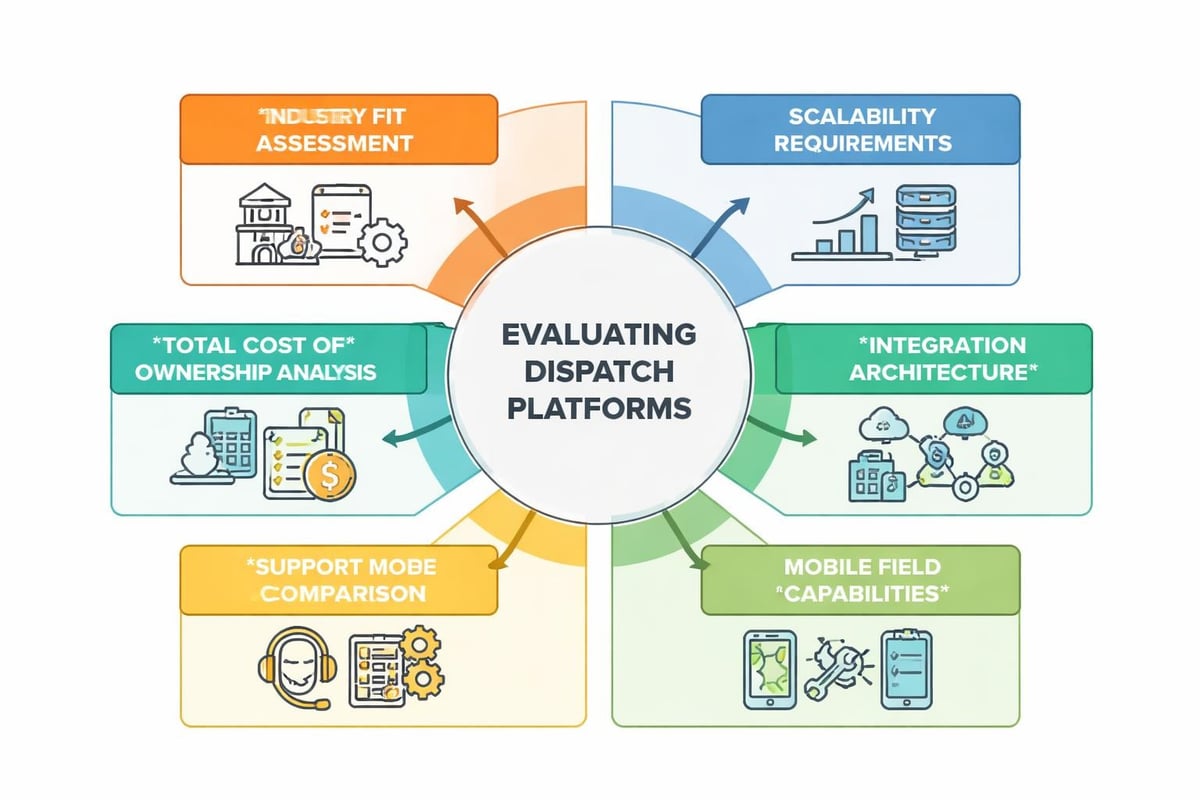

This level of integration requires operational platforms designed for automation rather than retrofitted with AI as an afterthought. The modular operations platform approach enables teams to connect AI capabilities directly to transport, orders, assets, and field service execution without rebuilding core systems.

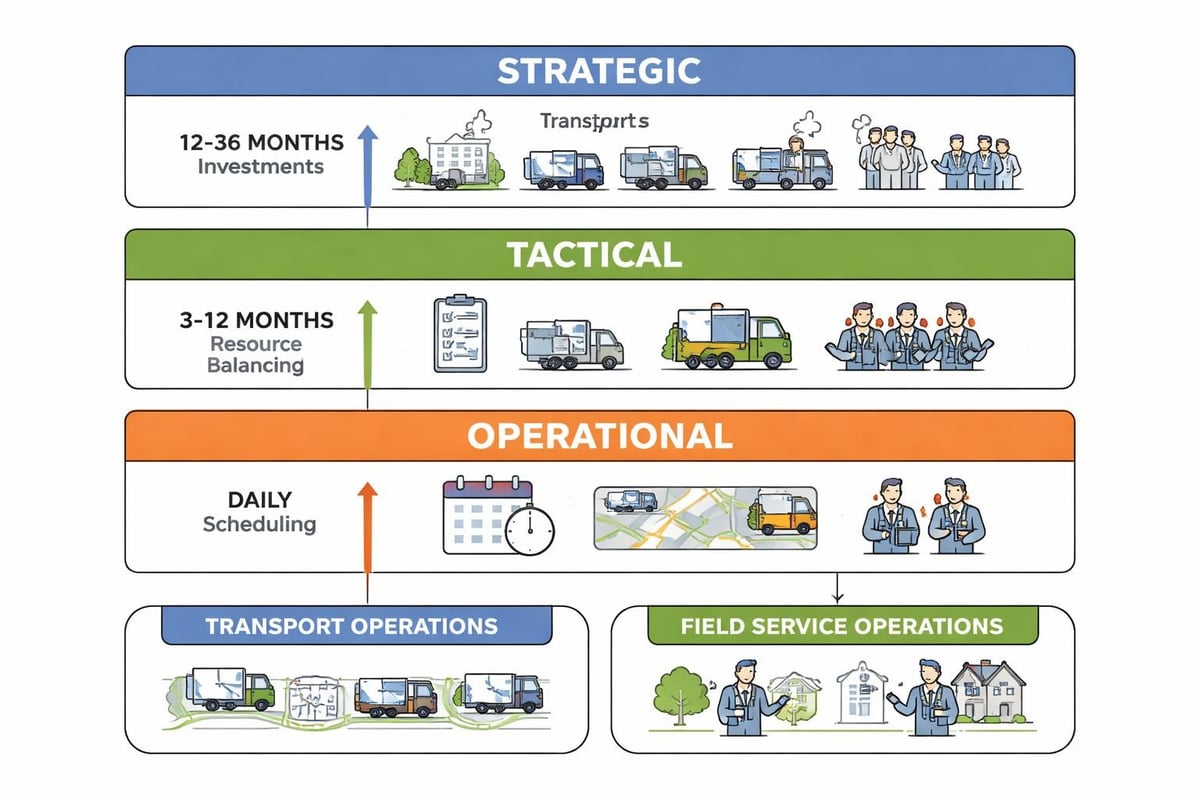

Connecting Planning to Execution

The gap between planning and execution destroys value in most organizations. Plans optimize for ideal conditions, but reality introduces delays, cancellations, equipment failures, and resource constraints. Static plans become obsolete within hours.

End-to-end AI closes this gap by continuously recalculating optimal actions as conditions change. When a delivery truck breaks down, the system immediately:

- Reassigns orders to available vehicles based on capacity and location

- Recalculates route sequences to minimize total delay

- Updates customer ETAs across all affected deliveries

- Generates exception reports for orders that cannot meet original commitments

- Triggers alerts to suppliers or partners if service levels are at risk
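The first and fourth steps above (reassignment and exception reporting) can be sketched with a greedy heuristic. This is an illustration, not a production routing algorithm: `Vehicle`, `Order`, and `reassign_orders` are hypothetical names, distance is straight-line, and a real system would also respect driver hours, time windows, and traffic.

```python
from dataclasses import dataclass, field
from math import hypot


@dataclass
class Vehicle:
    name: str
    position: tuple
    free_capacity: int
    orders: list = field(default_factory=list)


@dataclass
class Order:
    order_id: str
    dropoff: tuple
    size: int


def reassign_orders(stranded, vehicles):
    """Greedy reassignment: the nearest vehicle with enough free capacity wins.

    Returns the orders that no vehicle could absorb (the exception report).
    """
    exceptions = []
    # Place the largest orders first so small ones do not crowd them out.
    for order in sorted(stranded, key=lambda o: o.size, reverse=True):
        candidates = [v for v in vehicles if v.free_capacity >= order.size]
        if not candidates:
            exceptions.append(order)
            continue
        nearest = min(
            candidates,
            key=lambda v: hypot(v.position[0] - order.dropoff[0],
                                v.position[1] - order.dropoff[1]),
        )
        nearest.orders.append(order.order_id)
        nearest.free_capacity -= order.size
    return exceptions
```

Orders returned as exceptions are the ones that cannot meet their original commitment and would feed the customer and partner alerts.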

This requires AI models embedded in operational workflows, not separated from them. The system needs real-time data about vehicle locations, driver hours, order priorities, customer requirements, and traffic conditions. Models must run frequently enough to respond before circumstances change again.

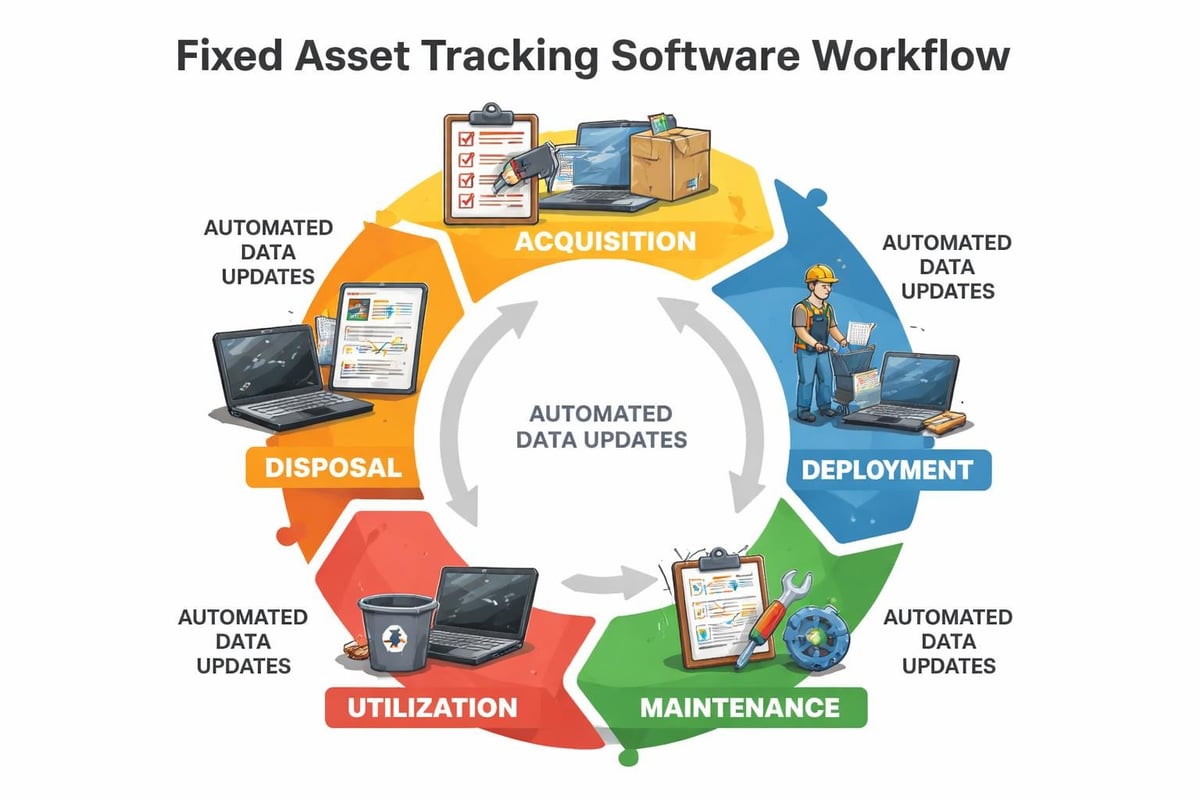

Data Flow and Model Lifecycle Management

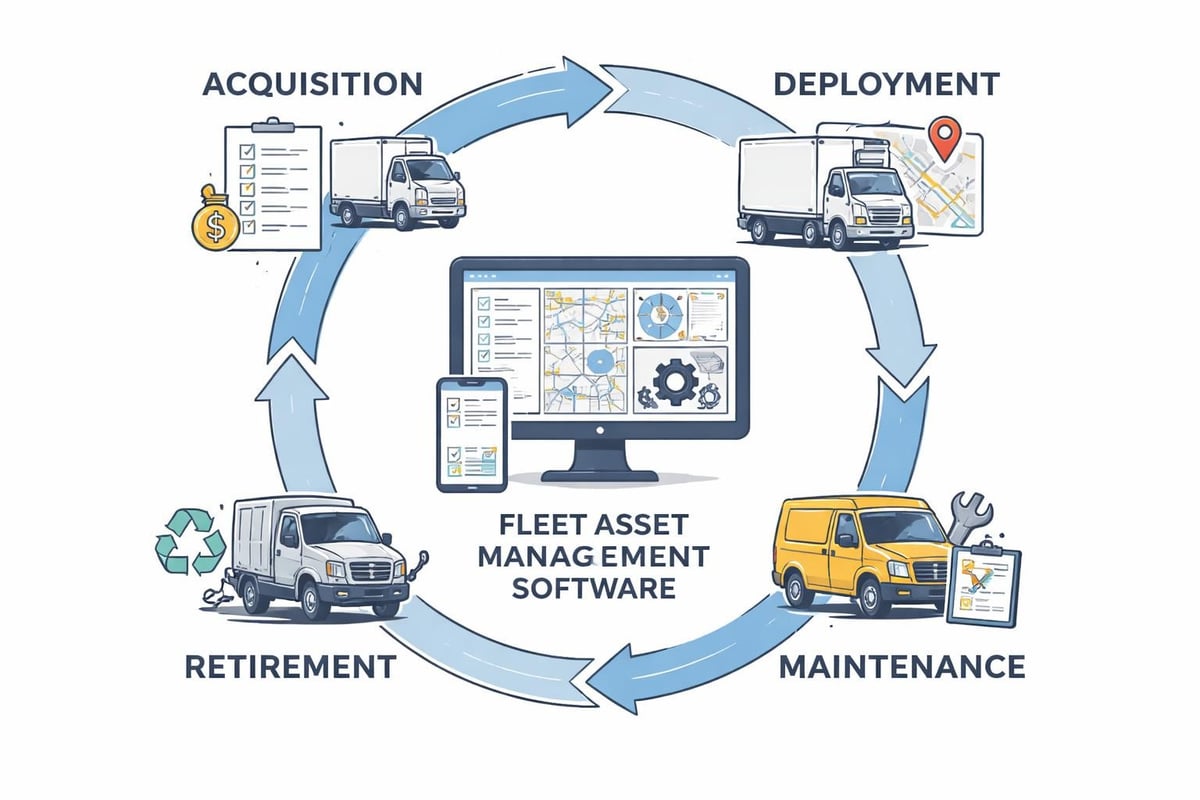

Sustainable end-to-end AI depends on managing the complete model lifecycle as a production process, not a research project. Models degrade over time as patterns shift, new product lines launch, customer behavior evolves, and market conditions change. Without systematic retraining, yesterday's accurate predictions become today's costly errors.

The lifecycle begins with labeled training data extracted from historical operations. Quality labels matter more than sophisticated algorithms: if outcomes are mislabeled, errors recorded inconsistently, or edge cases excluded, models learn the wrong patterns. Operations teams must validate that training data represents reality, including exceptions and rare events that matter most.

Continuous Learning Frameworks

Static models trained once and deployed forever fail in dynamic business environments. End-to-end AI implements continuous learning where:

- New operational data automatically flows into training pipelines

- Model performance metrics track accuracy against live outcomes

- Automated retraining triggers when drift exceeds thresholds

- A/B testing validates new model versions before full deployment

- Rollback mechanisms restore previous versions if performance degrades
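A drift-based retraining trigger, as in the third item above, needs a drift measure and a threshold. The sketch below uses the population stability index (PSI), one common choice that the article itself does not prescribe; the bin edges and the 0.2 threshold are assumptions to be set per feature.

```python
from math import log


def population_stability_index(expected, actual, edges):
    """PSI between two samples, using shared bin edges.

    expected: values seen at training time; actual: recent live values.
    """
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # The bin index is the number of edges the value has reached.
            counts[sum(v >= e for e in edges)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e_shares, a_shares = bin_shares(expected), bin_shares(actual)
    return sum((a - e) * log(a / e) for e, a in zip(e_shares, a_shares))


PSI_RETRAIN_THRESHOLD = 0.2  # assumed rule-of-thumb value, not a standard


def should_retrain(expected, actual, edges):
    """True when the live distribution has drifted past the threshold."""
    return population_stability_index(expected, actual, edges) > PSI_RETRAIN_THRESHOLD
```

In practice the trigger would start a retraining job whose output still passes through A/B validation before replacing the live model.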

The EndToEndML pipeline approach demonstrates how automated workflows can handle preprocessing, training, evaluation, and deployment with minimal manual intervention. This reduces the specialized expertise required while maintaining rigor in model development.

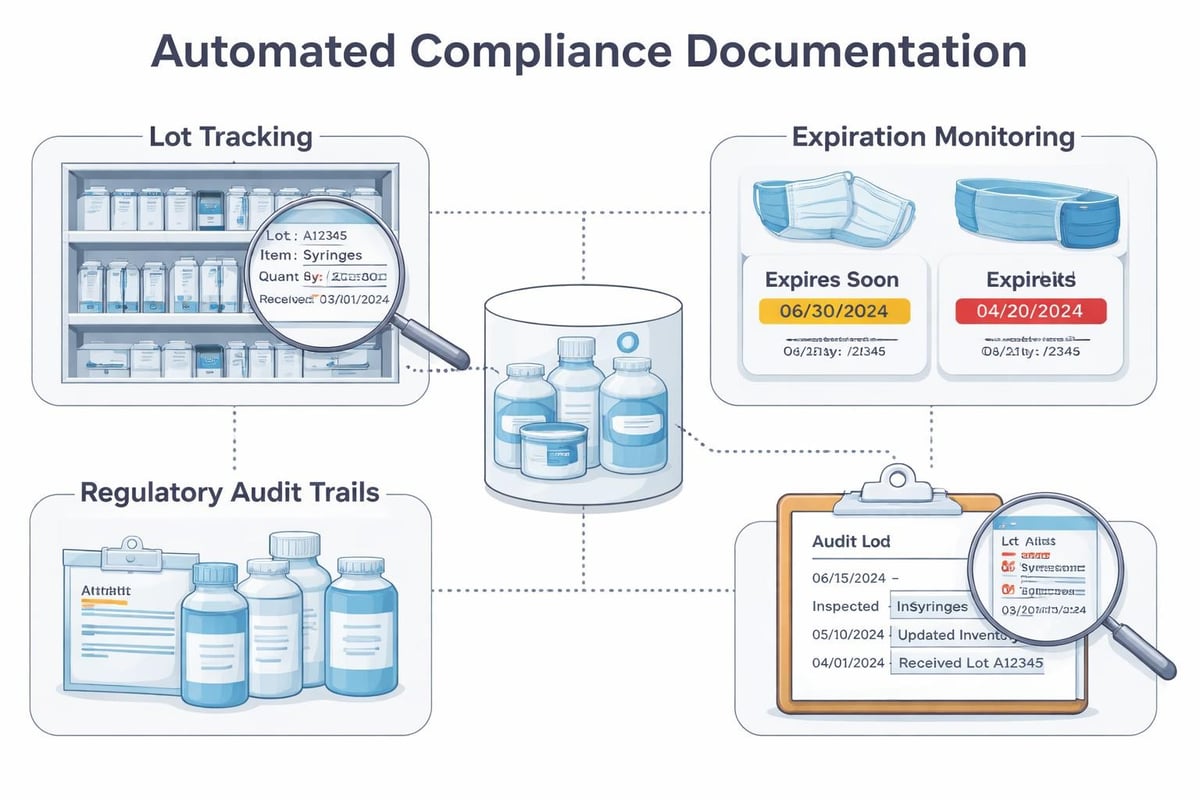

Production AI systems must handle model versioning, experiment tracking, and reproducibility. When a model makes a critical business decision, teams need to reconstruct exactly which version ran, what data it used, and why it produced that output. Verifiable AI pipelines using cryptographic methods address these transparency and trust requirements in regulated industries.
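As a rough illustration of reconstructing which version ran on which data, each prediction can be logged with content hashes. This sketch uses only SHA-256 from the Python standard library; `audit_record` and `verify` are hypothetical helpers, and a hash alone only detects changes, so a regulated setting would additionally sign or externally anchor the records.

```python
import hashlib
import json


def fingerprint(payload: bytes) -> str:
    """SHA-256 hex digest of raw bytes."""
    return hashlib.sha256(payload).hexdigest()


def audit_record(model_version, model_bytes, features, prediction):
    """Build an integrity-checked record of one prediction."""
    # sort_keys gives a stable serialization, so equal inputs hash equally.
    canonical_input = json.dumps(features, sort_keys=True).encode("utf-8")
    record = {
        "model_version": model_version,
        "model_sha256": fingerprint(model_bytes),
        "input_sha256": fingerprint(canonical_input),
        "prediction": prediction,
    }
    body = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_sha256"] = fingerprint(body)
    return record


def verify(record):
    """Recompute the record hash and compare it with the stored one."""
    body = {k: v for k, v in record.items() if k != "record_sha256"}
    expected = fingerprint(json.dumps(body, sort_keys=True).encode("utf-8"))
    return record["record_sha256"] == expected
```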

Operational Challenges and Practical Solutions

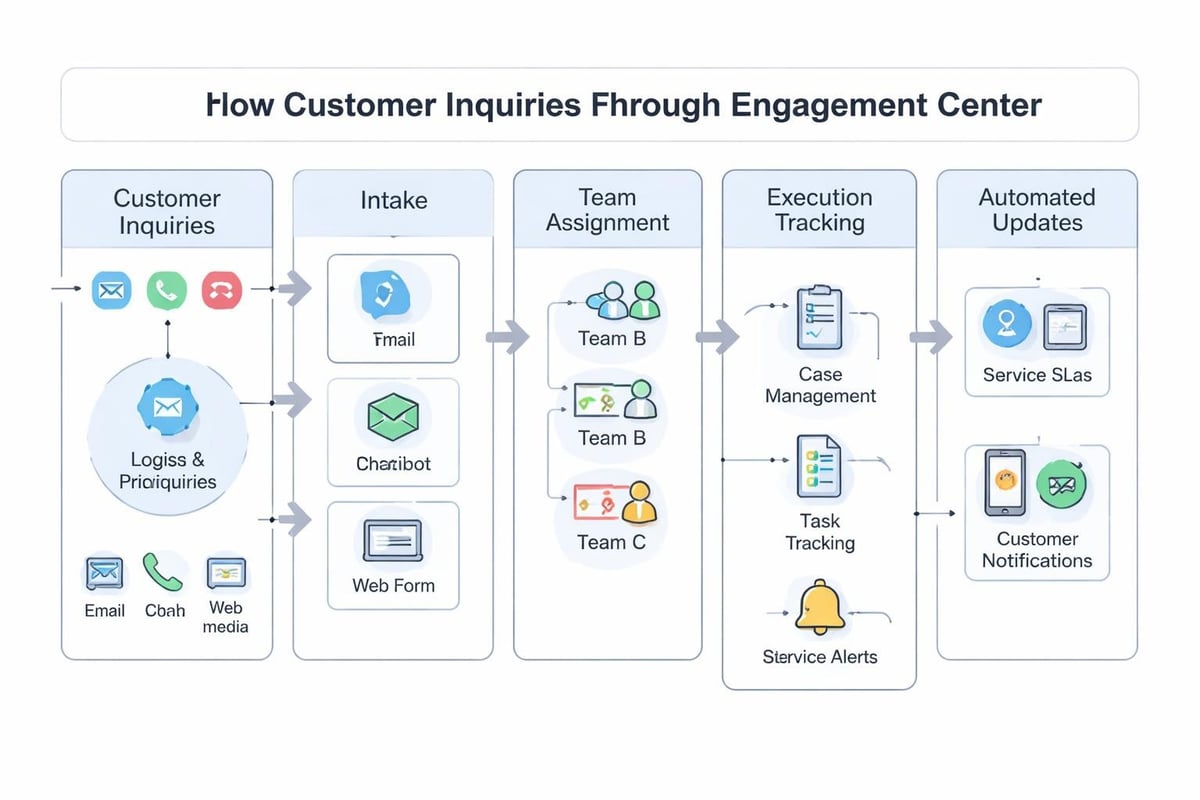

Organizations struggle with end-to-end AI not because the technology fails, but because implementation crosses organizational boundaries. Data scientists report to analytics teams, IT owns infrastructure, operations controls execution systems, and business units define requirements. No single group has authority or visibility across the complete flow.

Breaking Down Organizational Silos

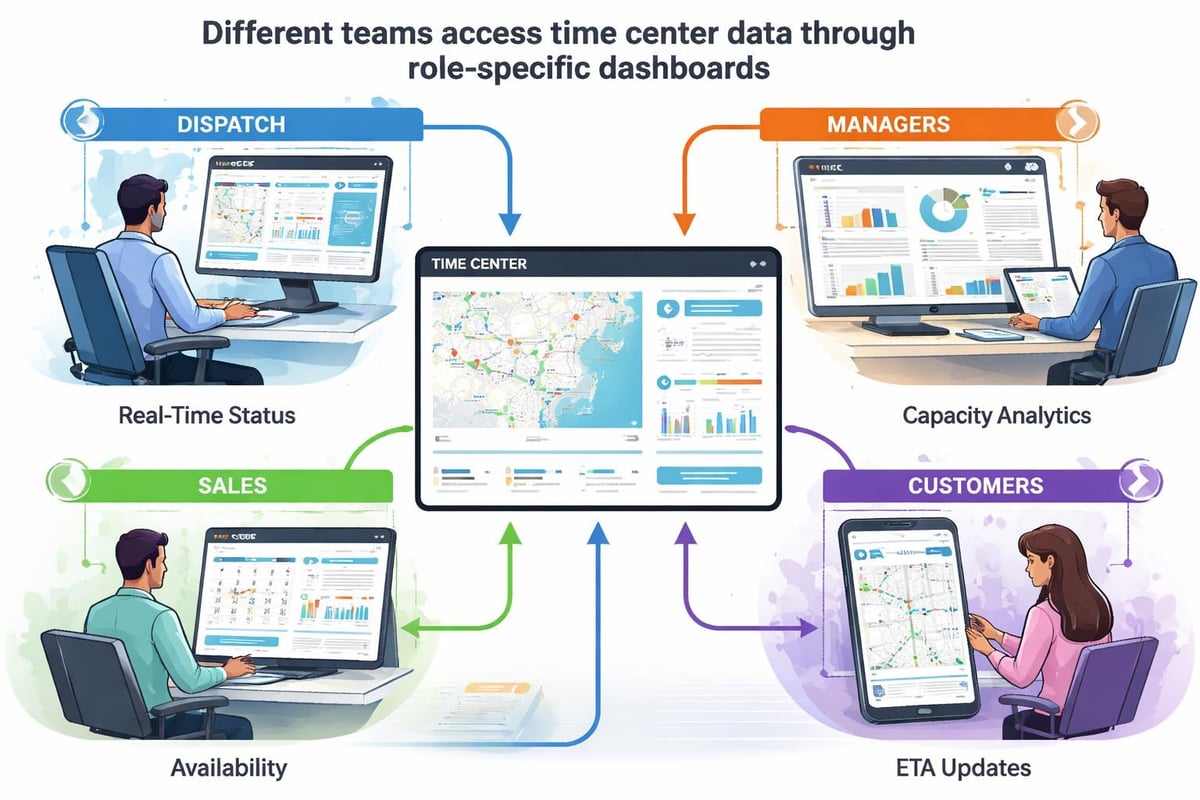

Successful end-to-end AI requires cross-functional ownership where teams share accountability for outcomes, not just their component pieces. This means:

- Shared metrics that measure business impact, not just model accuracy or system uptime

- Integrated tooling where data teams see operational constraints and operations teams understand model capabilities

- Unified platforms that connect planning, execution, and learning in one environment

- Clear handoff protocols defining how models transition from development to production

- Incident response processes that address both technical failures and business exceptions

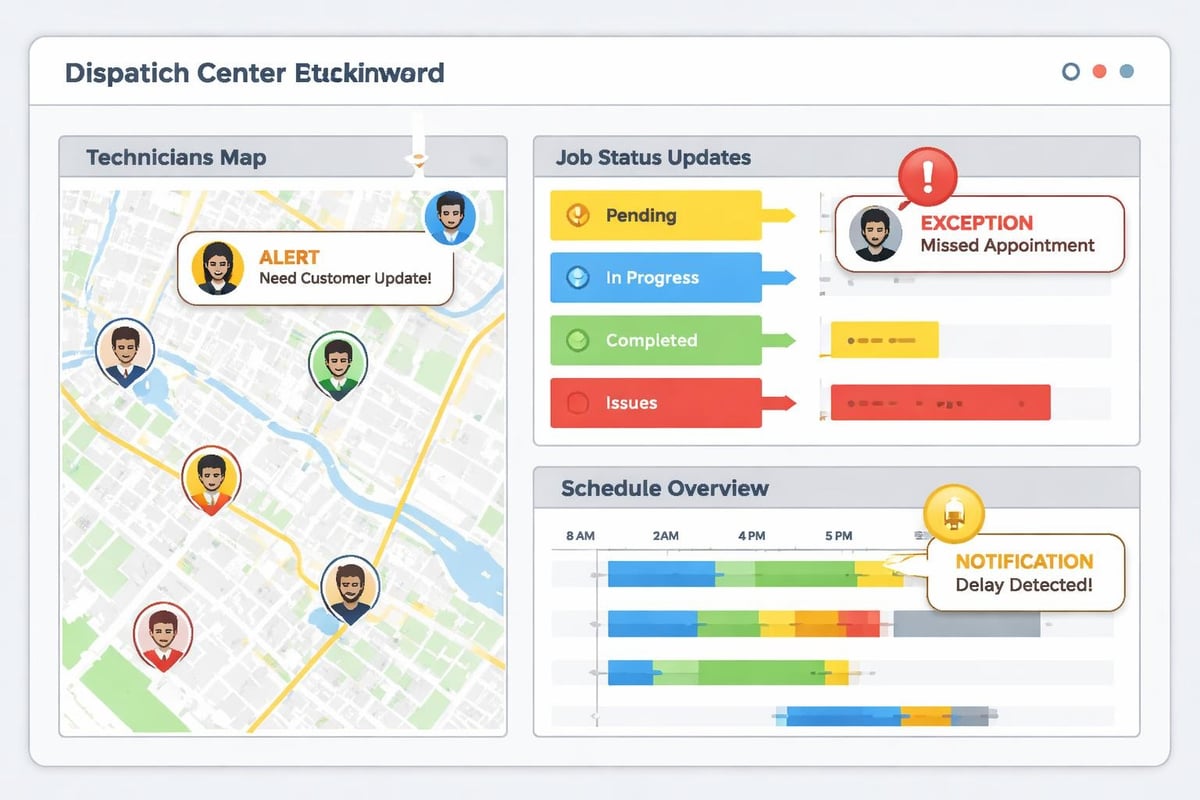

Operations platforms designed for transport, logistics, field service, and rental businesses can accelerate this integration by providing a shared command layer where AI automation connects naturally to daily execution. When the platform already unifies orders, assets, routes, and resources, embedding AI becomes configuration rather than custom development.

Scaling AI Across Multi-Site Operations

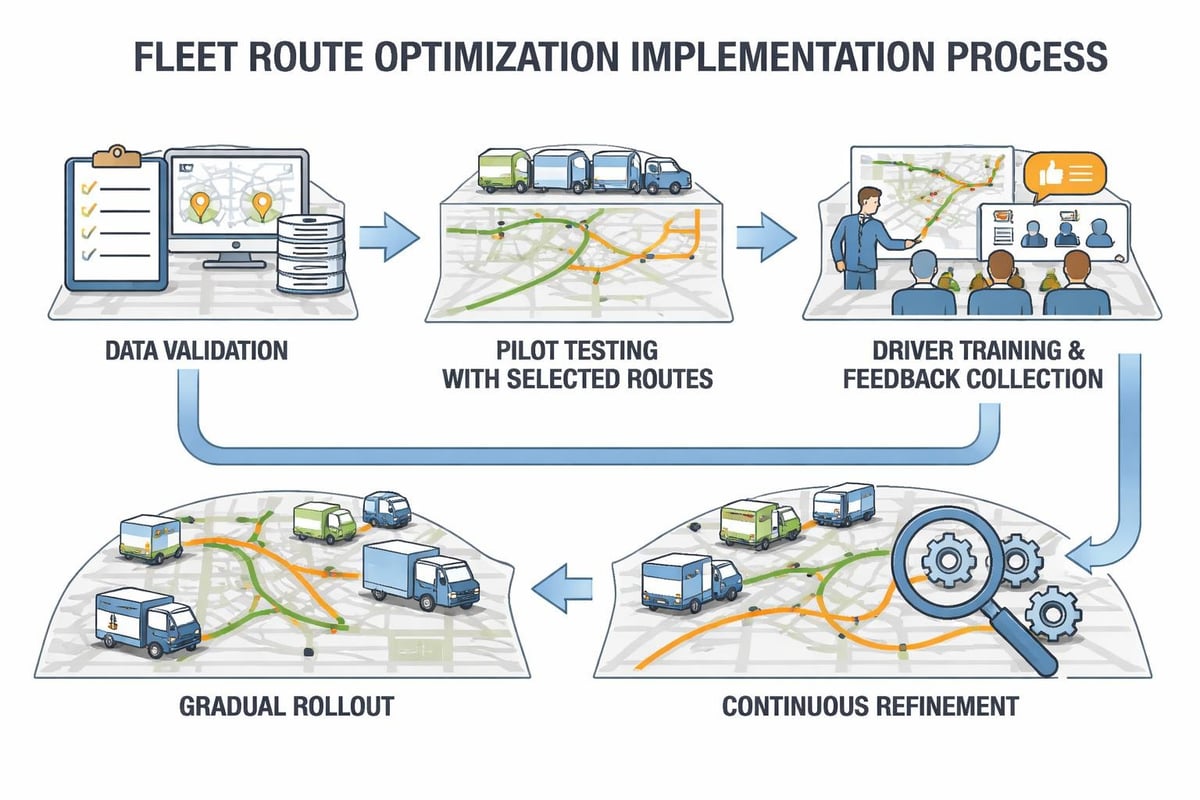

Single-location AI pilots often succeed while enterprise rollouts stumble. The complexity multiplies with geography, regulatory requirements, language differences, local operational practices, and varying data availability. End-to-end AI at scale requires architecture that handles this heterogeneity without fragmenting back into disconnected point solutions.

Centralized models assume uniform processes and consistent data quality across all sites. This rarely matches reality. Regional differences in customer behavior, seasonal patterns, supplier networks, and competitive dynamics mean a model optimized for one location performs poorly elsewhere.

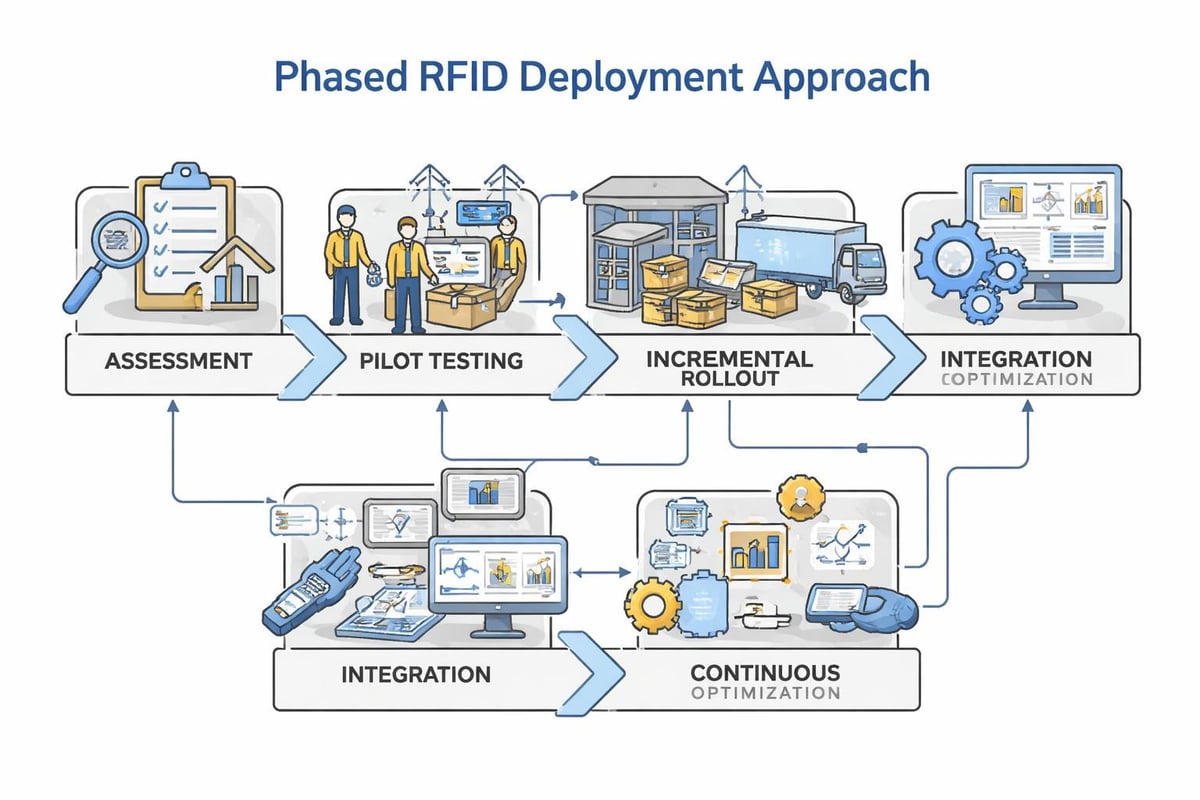

Federated approaches balance central control with local adaptation:

- Core models train on aggregated cross-site data to learn general patterns

- Site-specific fine-tuning adapts to local conditions without rebuilding from scratch

- Shared feature engineering ensures consistent data preparation

- Central monitoring detects when local drift indicates systemic changes requiring core model updates
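The split between a core model and site-level adaptation can be shown with a deliberately tiny stand-in: a pooled average as the "core model" and a shrunken per-site correction as the "fine-tuning". This is not federated learning in the strict sense, and `fit_core`, `fit_site_offsets`, and `prior_strength` are illustrative assumptions.

```python
from statistics import mean


def fit_core(samples):
    """Core model: the mean outcome across all sites (deliberately trivial)."""
    return mean(y for _, y in samples)


def fit_site_offsets(samples, core, prior_strength=5.0):
    """Per-site correction, shrunk toward zero when a site has few samples."""
    by_site = {}
    for site, y in samples:
        by_site.setdefault(site, []).append(y - core)
    return {
        site: sum(residuals) / (len(residuals) + prior_strength)
        for site, residuals in by_site.items()
    }


def predict(site, core, offsets):
    """Core prediction plus the local correction; unknown sites get the core only."""
    return core + offsets.get(site, 0.0)
```

The shrinkage term means a new site with little data stays close to the core model, while a well-observed site is allowed to diverge.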

| Scaling Challenge | Symptom | End to End AI Solution |
|---|---|---|
| Inconsistent data formats | Model fails when deployed to new sites | Centralized schema enforcement with local adapters |
| Regional operational differences | Predictions accurate at pilot site but wrong elsewhere | Federated training with site-level fine-tuning |
| Varying deployment infrastructure | Models run in cloud but sites lack connectivity | Edge deployment with offline operation and sync |
| Local compliance requirements | Data cannot leave jurisdiction | On-premise training with encrypted central aggregation |

For multi-site operations, AI automation must respect the autonomy field teams need while maintaining enterprise visibility. The Transport Command Center demonstrates this balance by providing real-time control that unifies orders, assets, routes, and resources while enabling local dispatch teams to adapt to immediate conditions. AI routing and automated dispatch workflows operate within constraints defined by both central policy and local knowledge.

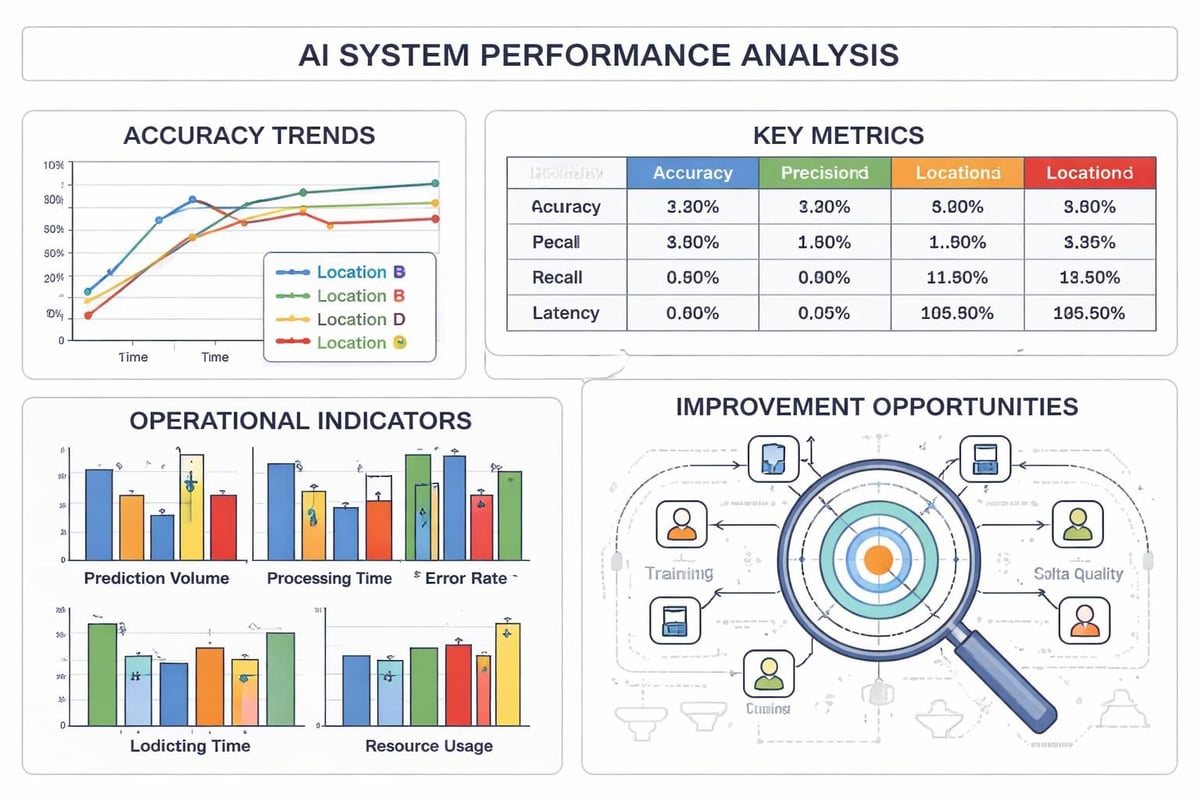

Managing Model Performance Across Locations

When the same model version runs across dozens of sites, performance variation reveals hidden operational differences. One location consistently achieves 95% forecast accuracy while another struggles at 75%. The gap indicates process differences, data quality issues, or market conditions that the model does not capture.

End-to-end AI treats these variations as learning opportunities rather than failures. Systematic comparison identifies which local practices improve outcomes and which create blind spots. High-performing sites provide templates for improvement. Persistent underperformance signals where local conditions require specialized models or where operational changes would deliver more value than algorithm refinement.

Building Toward Autonomous Operations

The ultimate goal of end-to-end AI extends beyond automation to autonomy: systems that sense changing conditions, decide appropriate responses, execute actions, and learn from outcomes with minimal human intervention. This does not eliminate human oversight but shifts it from routine decisions to exception handling and strategic guidance.

Autonomous operations require trust built through demonstrated reliability. Teams will not delegate critical decisions to AI that fails unpredictably or makes choices they cannot explain. The path to autonomy follows a progression:

- AI recommendations that humans review and approve before execution

- Automated execution for routine scenarios with human monitoring

- Autonomous operation within defined boundaries with exception escalation

- Continuous expansion of autonomous boundaries as reliability proves consistent
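The progression above can be expressed as a gate that decides whether a proposed action executes, waits for review, or escalates. The sketch assumes three levels and two boundary parameters (`min_confidence`, `max_cost`); all names and default values are hypothetical.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    RECOMMEND = 1  # a human approves every action
    MONITORED = 2  # routine actions execute, humans watch
    BOUNDED = 3    # autonomous inside limits, exceptions escalate


def route_decision(level, confidence, cost, *, min_confidence=0.9, max_cost=1000.0):
    """Return 'execute', 'review', or 'escalate' for one proposed action."""
    if level == AutonomyLevel.RECOMMEND:
        return "review"
    within_bounds = confidence >= min_confidence and cost <= max_cost
    if within_bounds:
        return "execute"
    # Outside the boundary: monitored mode asks for review, bounded mode escalates.
    return "review" if level == AutonomyLevel.MONITORED else "escalate"
```

Expanding autonomy then becomes a configuration change (raising `max_cost` or lowering `min_confidence`) that can be made gradually as reliability is demonstrated.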

This progression respects the end-to-end principle in system design, which suggests that application-specific intelligence belongs at the endpoints where context and accountability reside. AI should augment human judgment in complex scenarios rather than replace it entirely.

The Role of Explainability and Trust

Black-box predictions create accountability gaps that prevent operational teams from trusting AI decisions. When a model recommends canceling a customer order, denying a service request, or reallocating resources, the team executing that decision needs to understand why. Unexplained recommendations get ignored or overridden, wasting AI investment.

End-to-end AI must include explainability throughout the pipeline:

- Feature importance showing which inputs most influenced each prediction

- Decision paths tracing how the model reached specific conclusions

- Confidence scores indicating prediction reliability

- Comparison cases highlighting similar historical scenarios and outcomes

- Override mechanisms allowing human judgment while capturing feedback for future training
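For the first item, feature importance per prediction is simplest to illustrate with a linear model, where each feature's contribution is its weight times its deviation from a baseline input. This is a sketch for that special case only; `explain_linear` is a hypothetical helper, and non-linear models need dedicated attribution methods.

```python
def explain_linear(weights, baseline, features):
    """Per-feature contribution of a linear model relative to a baseline input.

    contribution_i = weight_i * (feature_i - baseline_i)
    """
    contributions = {
        name: weights[name] * (features[name] - baseline[name])
        for name in weights
    }
    # Largest absolute influence first.
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
```

Showing the ranked contributions next to a recommendation gives the executing team a concrete reason to accept or override it.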

Modern approaches to end-to-end learning emphasize training single models from raw input to final output without intermediate steps. While this can improve accuracy by avoiding information loss at handoff points, it often reduces interpretability. Operational AI systems must balance end-to-end optimization with the explainability required for business adoption.

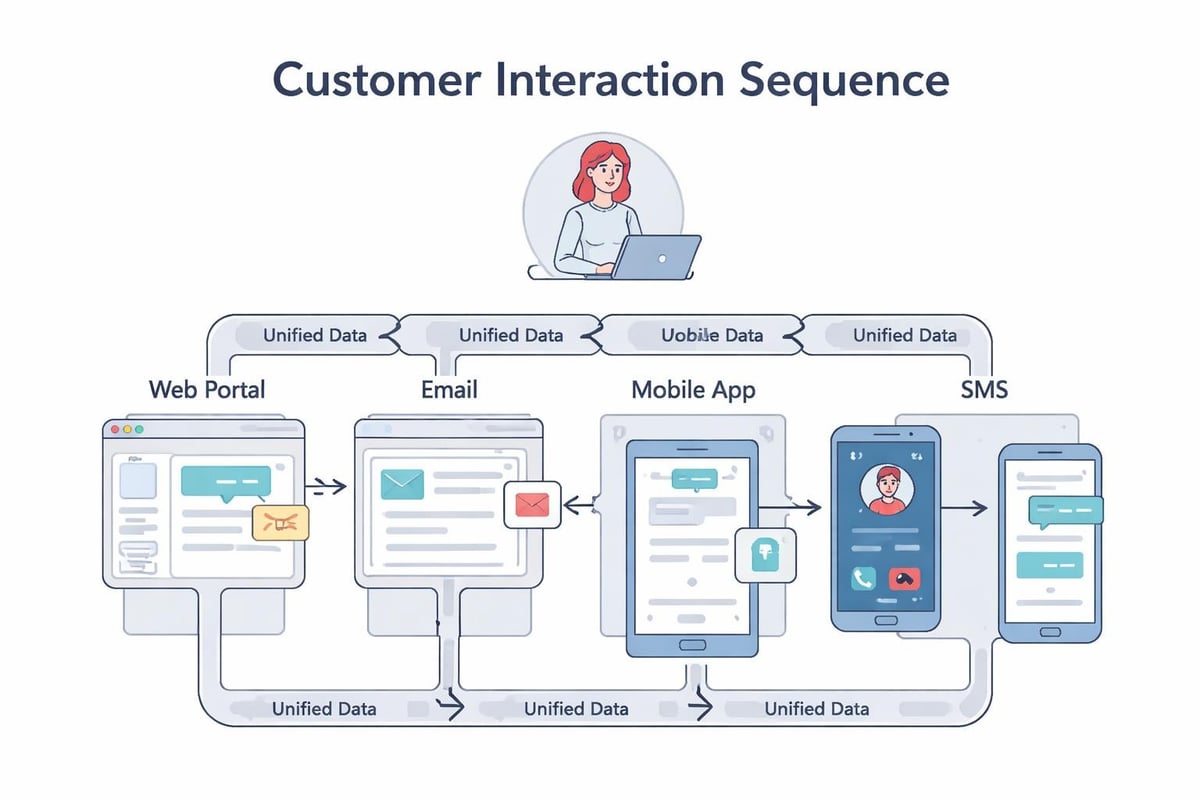

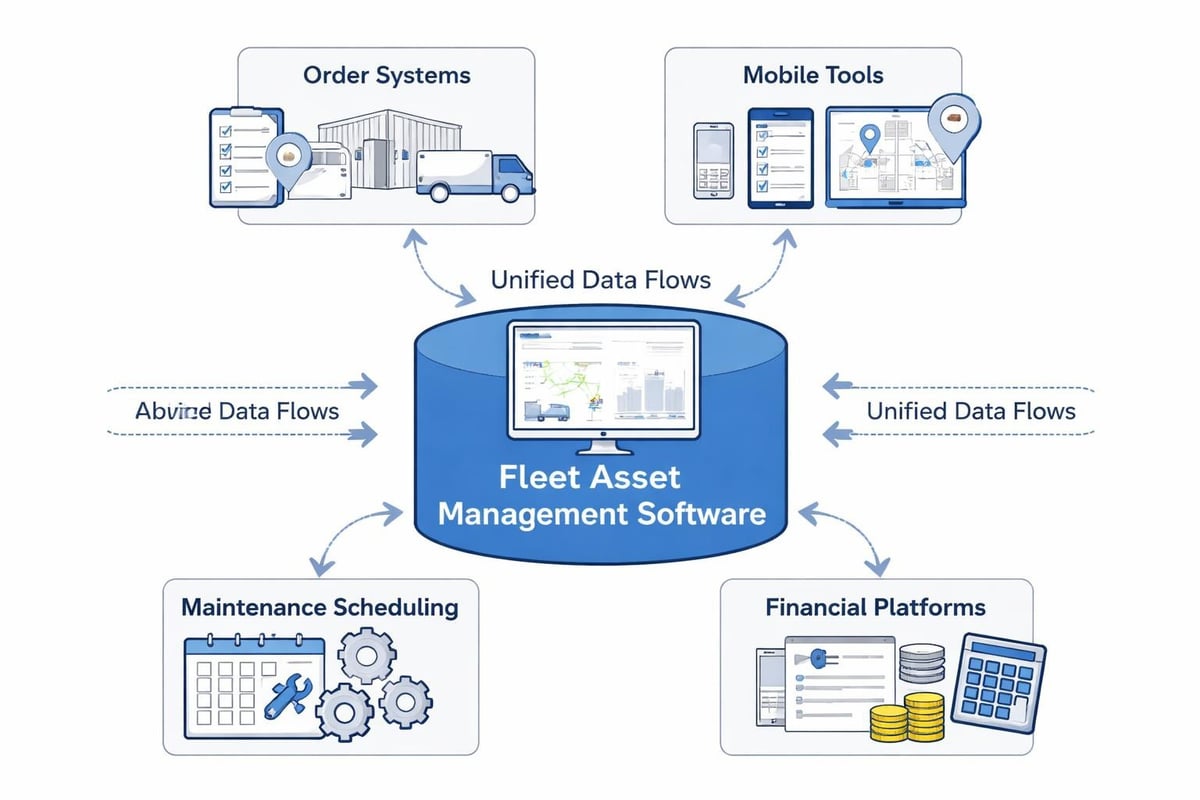

Integration with Existing Systems

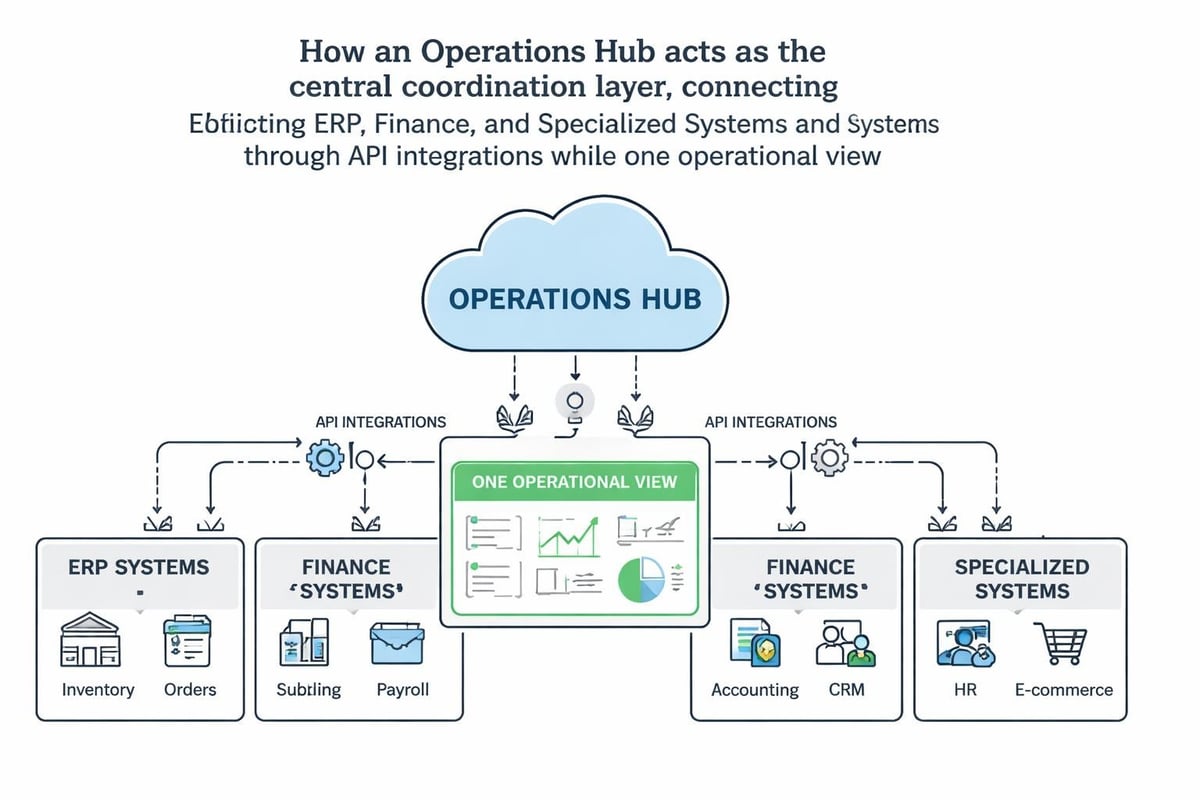

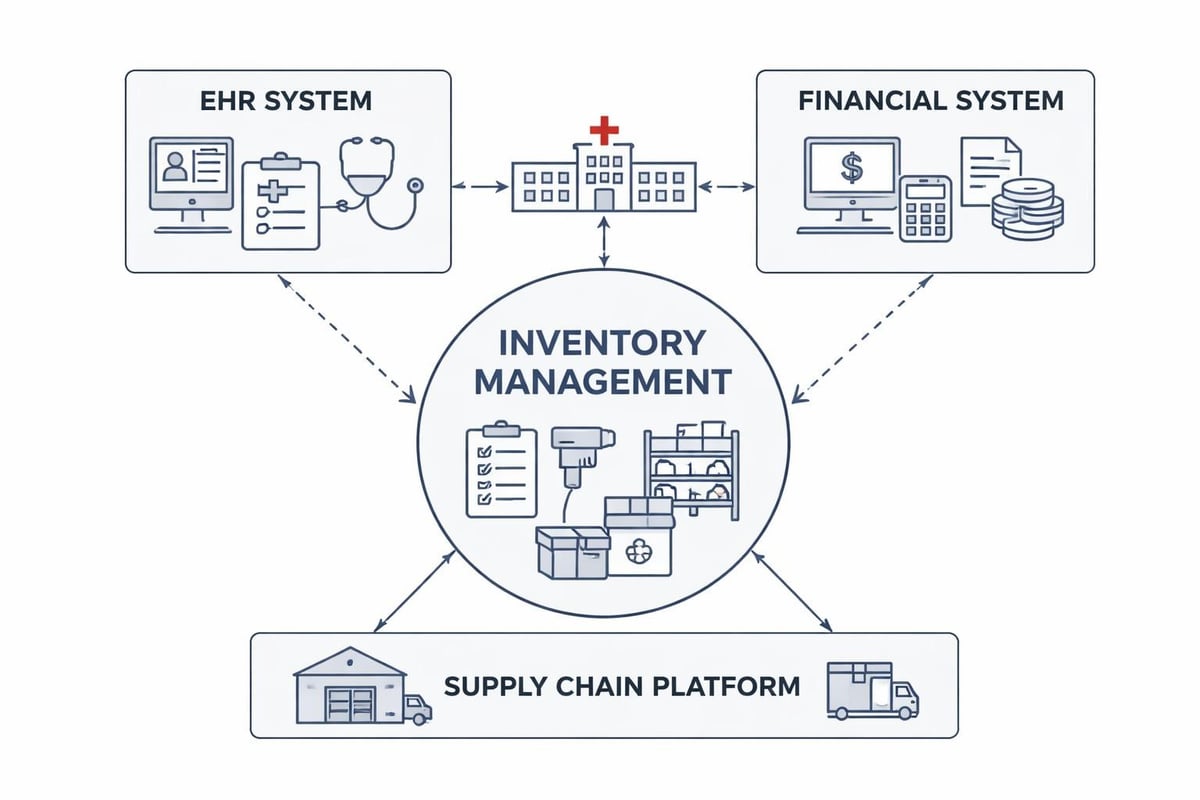

Most organizations cannot replace their entire technology stack to adopt end-to-end AI. Legacy ERP systems, industry-specific software, compliance tools, and partner integrations represent millions in investment and years of process refinement. Practical AI implementation must work with existing infrastructure, not require abandoning it.

API-First Architecture for AI Integration

Modern operations platforms provide API connectivity that enables AI models to access data from legacy systems and trigger actions without direct integration. This approach:

- Preserves existing financial and compliance systems of record

- Allows gradual migration as AI proves value in specific workflows

- Reduces implementation risk by avoiding big-bang replacements

- Enables best-of-breed choices rather than forcing single-vendor lock-in
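The API-first idea can be sketched as a narrow interface that the AI layer depends on, with the legacy system hidden behind an adapter. Everything here is hypothetical: `OrderSystem`, `InMemoryOrderSystem`, and `prioritize_late_orders` are illustrative names, and the in-memory class stands in for an adapter that would call a real system's API.

```python
from typing import Protocol


class OrderSystem(Protocol):
    """The narrow interface the AI layer depends on (hypothetical)."""

    def open_orders(self) -> list: ...

    def set_priority(self, order_id: str, priority: int) -> None: ...


class InMemoryOrderSystem:
    """Stand-in for a legacy system; a real adapter would call its API."""

    def __init__(self, orders):
        self._orders = {o["id"]: dict(o) for o in orders}

    def open_orders(self):
        return list(self._orders.values())

    def set_priority(self, order_id, priority):
        self._orders[order_id]["priority"] = priority


def prioritize_late_orders(system: OrderSystem, late_risk) -> int:
    """Raise priority on orders whose predicted lateness risk exceeds 0.5."""
    changed = 0
    for order in system.open_orders():
        if late_risk(order) > 0.5:
            system.set_priority(order["id"], 1)
            changed += 1
    return changed
```

Because the model-driven logic only sees the interface, the system of record behind it can be replaced or migrated later without touching the AI workflow.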

The Neovara technology approach demonstrates how API integrations can connect operational AI to ERP and finance systems while maintaining a unified command layer for daily execution. Financial transactions flow to the ERP while operational decisions happen in real time where work occurs.

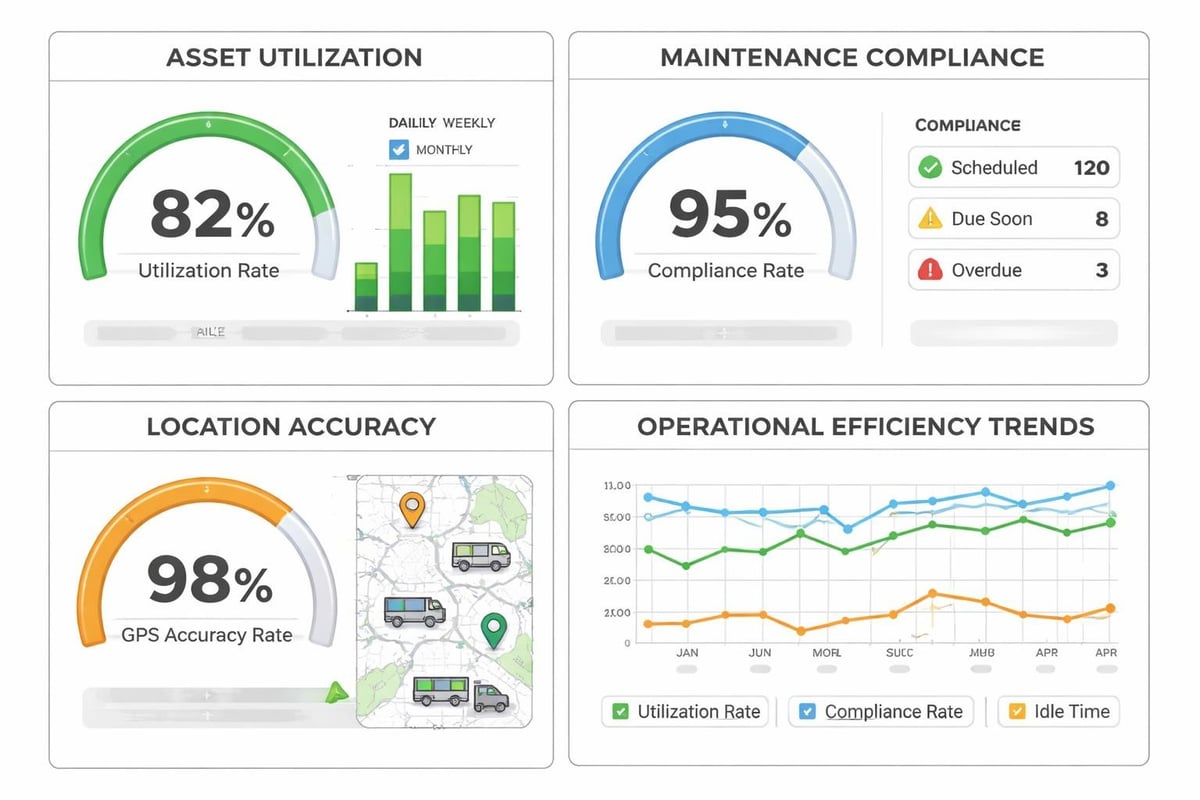

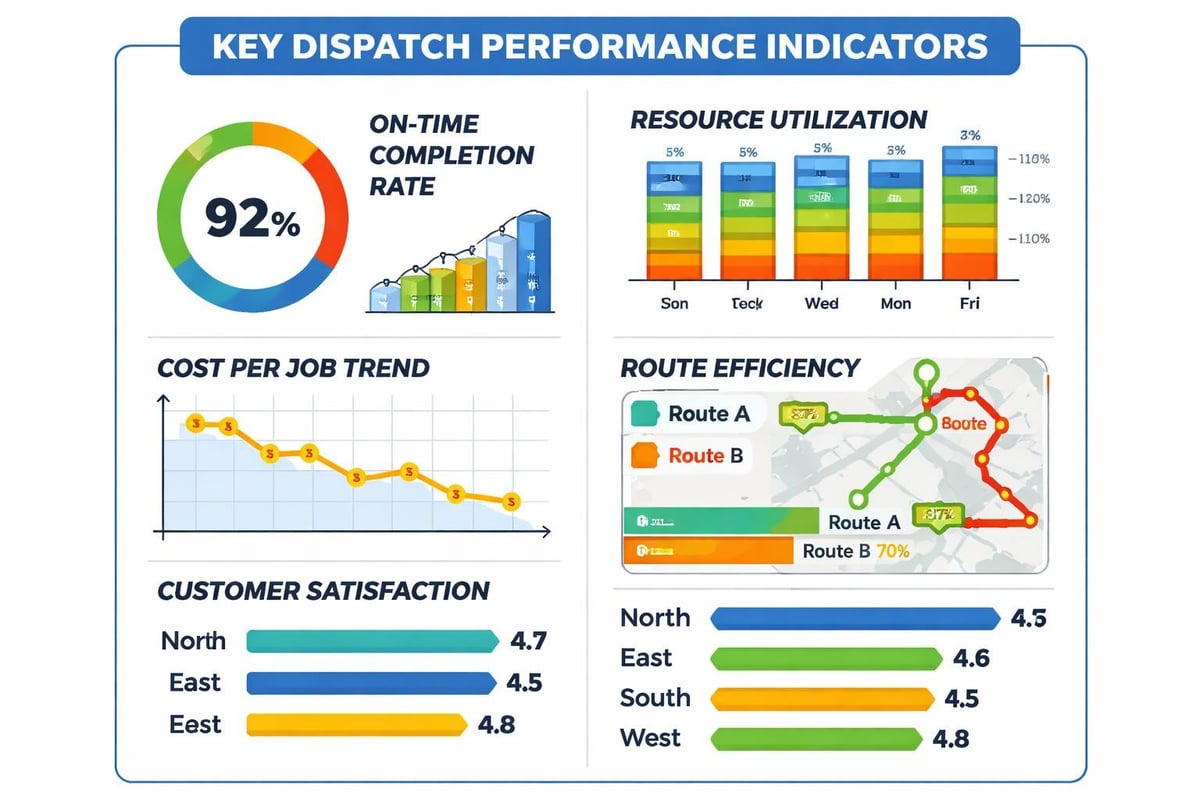

Measuring Business Impact Beyond Model Metrics

Data science teams naturally focus on metrics like accuracy, precision, recall, and F1 scores. These matter for model development but tell an incomplete story about business value. A 95% accurate model that reduces operational costs by 2% delivers less value than an 85% accurate model that cuts costs by 15%.

End-to-end AI requires business metrics that connect model performance to operational outcomes:

| Model Metric | Operational Translation | Business Impact |
|---|---|---|
| Demand forecast MAE | Stockout rate and excess inventory days | Working capital reduction and revenue capture |
| Route optimization gap | Average delivery time and fuel cost per order | Customer satisfaction and operating margin |
| Equipment failure prediction recall | Unplanned downtime hours and emergency repair costs | Service level achievement and maintenance spend |
| Churn prediction precision | Retention campaign ROI and customer lifetime value | Revenue retention and acquisition cost offset |
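The first row of the table can be made concrete with a few lines of arithmetic. The sketch below assumes, for simplicity, that each period's stock equals the forecast; the demand figures are invented purely for illustration.

```python
def mean_absolute_error(forecast, actual):
    """Average absolute gap between forecast and actual demand."""
    return sum(abs(f - a) for f, a in zip(forecast, actual)) / len(actual)


def stockout_rate(stocked, actual):
    """Share of periods in which demand exceeded what was stocked."""
    return sum(a > s for s, a in zip(stocked, actual)) / len(actual)


def excess_units(stocked, actual):
    """Units stocked but not sold, summed over periods."""
    return sum(max(s - a, 0) for s, a in zip(stocked, actual))


# Invented demand data: forecast_a is statistically closer, forecast_b runs high.
actual = [100, 120, 90, 110]
forecast_a = [105, 115, 95, 105]
forecast_b = [110, 130, 100, 120]
```

With these numbers `forecast_a` has the lower MAE (5 versus 10) but stocks out in half the periods, while `forecast_b` never stocks out at the cost of 40 excess units. Which one is better depends on the relative cost of lost sales and held inventory, which is exactly the translation the table describes.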

These translations require collaboration between data teams and operations leaders. Models should optimize for business outcomes, not statistical perfection. In some scenarios, a simpler model that operations teams understand and trust delivers better results than a sophisticated algorithm that people work around.

Research on guideline-driven AI deployment highlights how automating the connection between model outputs and business processes reduces human intervention while improving scalability. When deployment follows clear rules about which predictions trigger which actions, the system becomes more consistent and measurable.

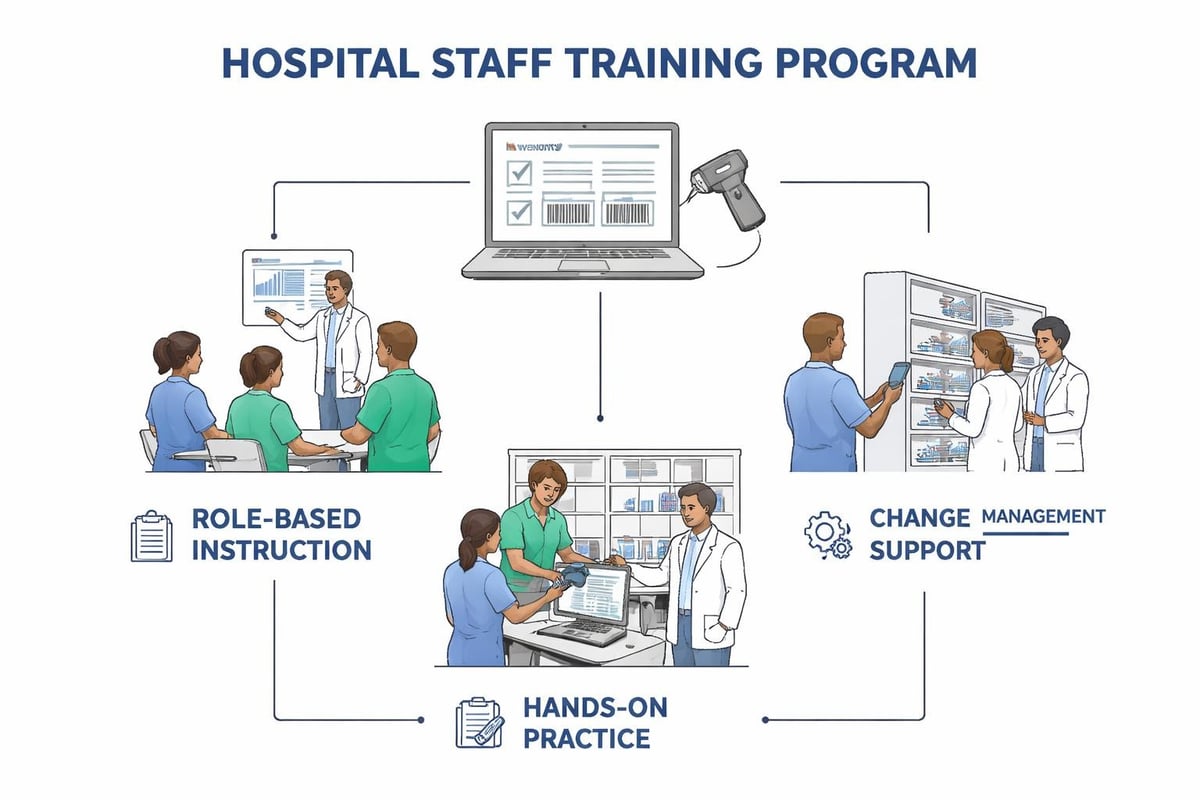

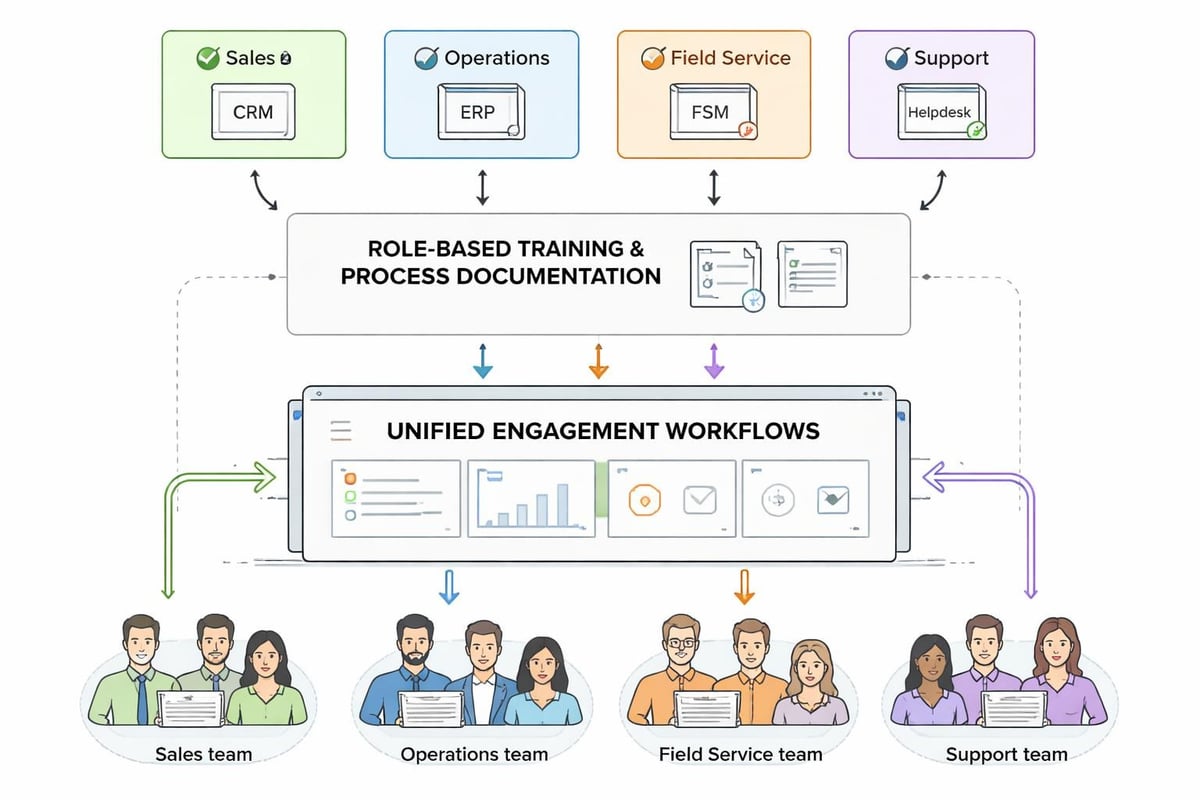

Training and Change Management

End-to-end AI changes how people work. Planners who once spent hours building schedules now review AI-generated plans and handle exceptions. Customer service teams shift from answering status questions to resolving complex issues that AI cannot handle. Field teams receive dynamic assignments rather than static daily routes.

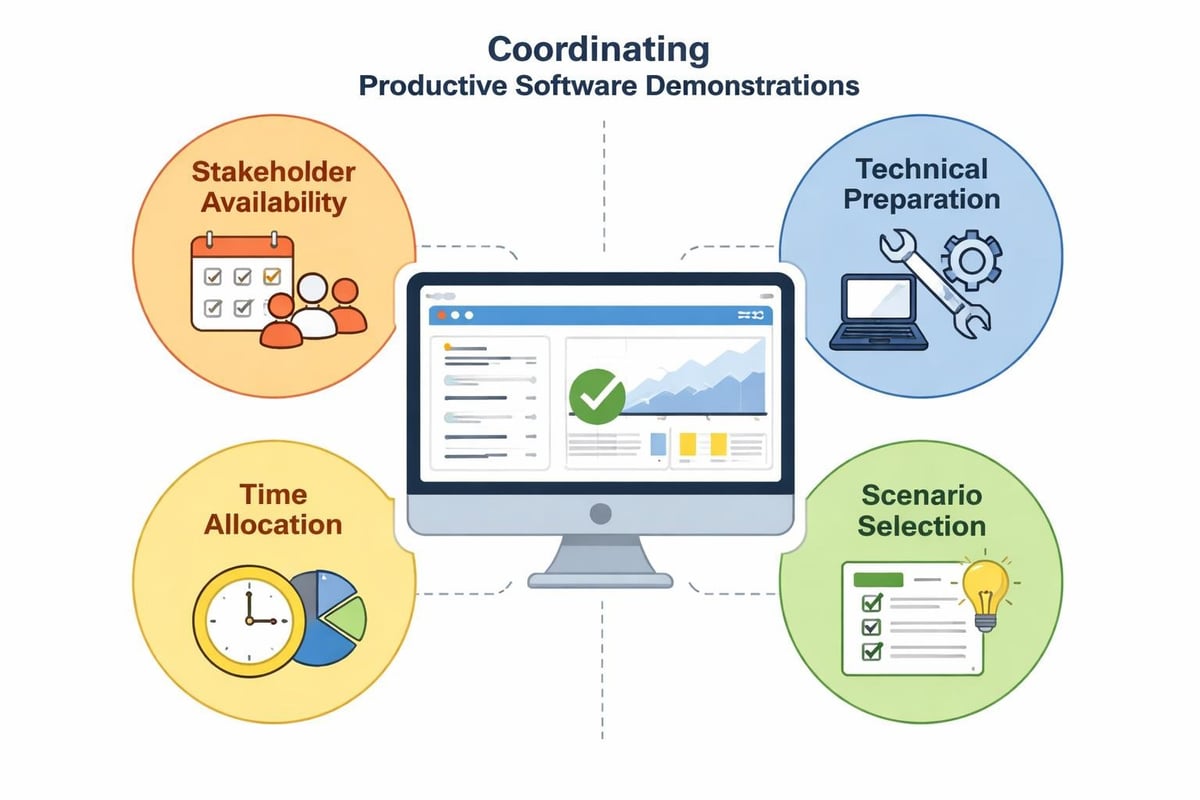

This transition requires more than technical training. Teams need to understand:

- How the AI works at a conceptual level without requiring data science expertise

- What decisions the AI makes autonomously and which require human approval

- How to recognize when AI recommendations need override and how to provide feedback

- What success looks like in the new workflow and how individual performance is measured

- Who to contact when AI behavior seems incorrect or produces unexpected results

Organizations underestimate this change management challenge and pay with low adoption, workarounds, and eventual abandonment. The AI works technically but fails organizationally because people do not trust it, understand it, or believe it helps them succeed.

Future-Proofing Operational AI Systems

Technology evolves rapidly while business operations require stability. The end-to-end AI architecture you build today must adapt to new algorithms, different data sources, evolving regulatory requirements, and changing business models without requiring complete rebuilds.

Future-proof design principles include:

- Modular architecture where components can be upgraded independently without cascading failures

- Open standards for data formats, model APIs, and integration protocols

- Version management that supports running multiple model versions simultaneously during transitions

- Extensibility allowing new data sources and model types to be added without core system changes

- Graceful degradation when AI components fail, allowing manual processes to continue
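Graceful degradation, the last principle above, can be as simple as wrapping the model call so that a failure falls back to a manual rule. This is a minimal sketch; `with_fallback` and the `"model"` / `"fallback"` tags are hypothetical, and a production wrapper would also add timeouts and logging.

```python
def with_fallback(model_predict, manual_rule):
    """Wrap a model call so that a failure degrades to a manual rule."""
    def predict(features):
        try:
            return model_predict(features), "model"
        except Exception:
            # Any model failure falls back; the tag lets monitoring count these.
            return manual_rule(features), "fallback"
    return predict
```

Returning the source tag with each result lets monitoring track how often the fallback path is used, which is itself a signal that a component needs attention.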

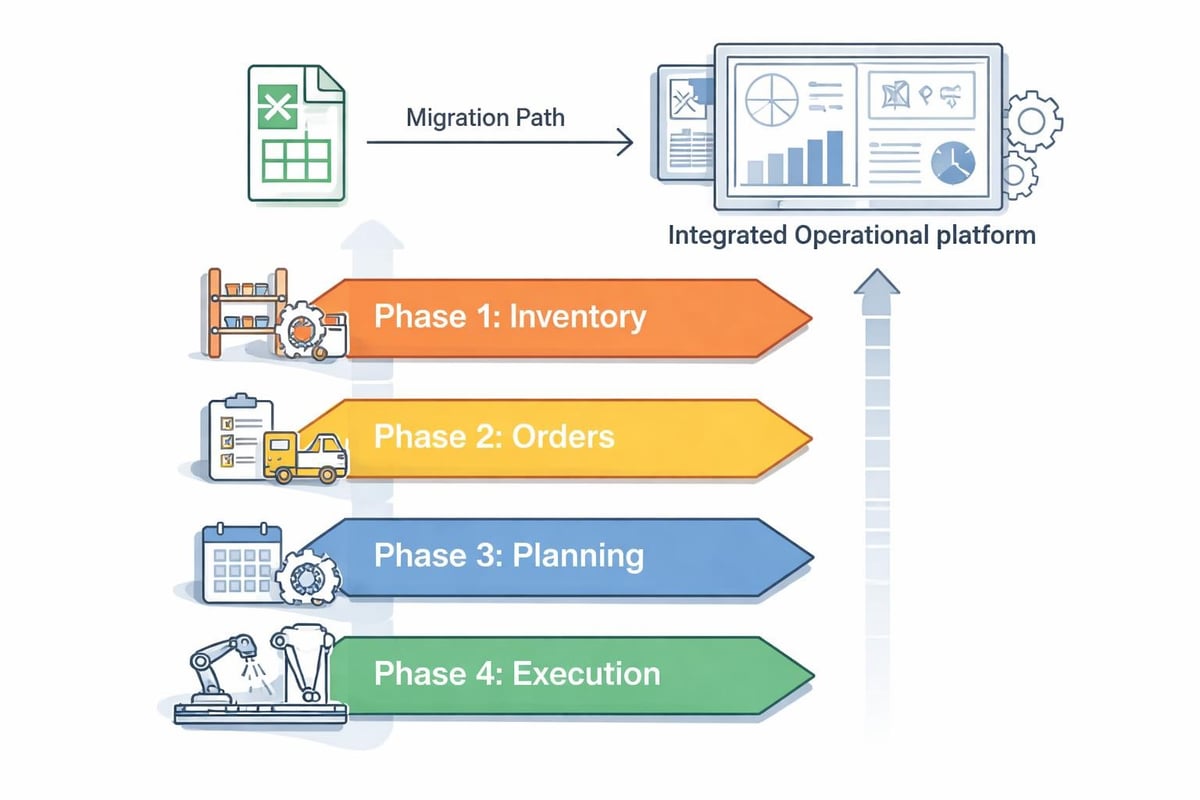

The modular operations platform concept applies this thinking to business operations: start with the modules you need today and expand when it adds value. The same philosophy extends to AI capabilities: embed automation where it delivers clear ROI and preserve flexibility for future enhancement.

As AI technology matures, new capabilities like large language models for natural language interfaces, computer vision for automated inspection, and reinforcement learning for dynamic optimization become practical. Systems designed with clear abstraction layers can incorporate these advances without disrupting proven workflows.

End-to-end AI transforms artificial intelligence from experimental technology into operational infrastructure by connecting every stage of the AI lifecycle into unified workflows that learn and improve continuously. The business value comes not from model sophistication but from seamless integration between prediction and execution, planning and adaptation, automation and oversight. Neovara Operations Center brings this integration to multi-workflow businesses through a modular platform that unifies transport, orders, assets, field service, and planning in one command layer where AI automation reduces manual steps and keeps work moving when reality changes. Book a demo to see how end-to-end operations and intelligent automation work together in practice.